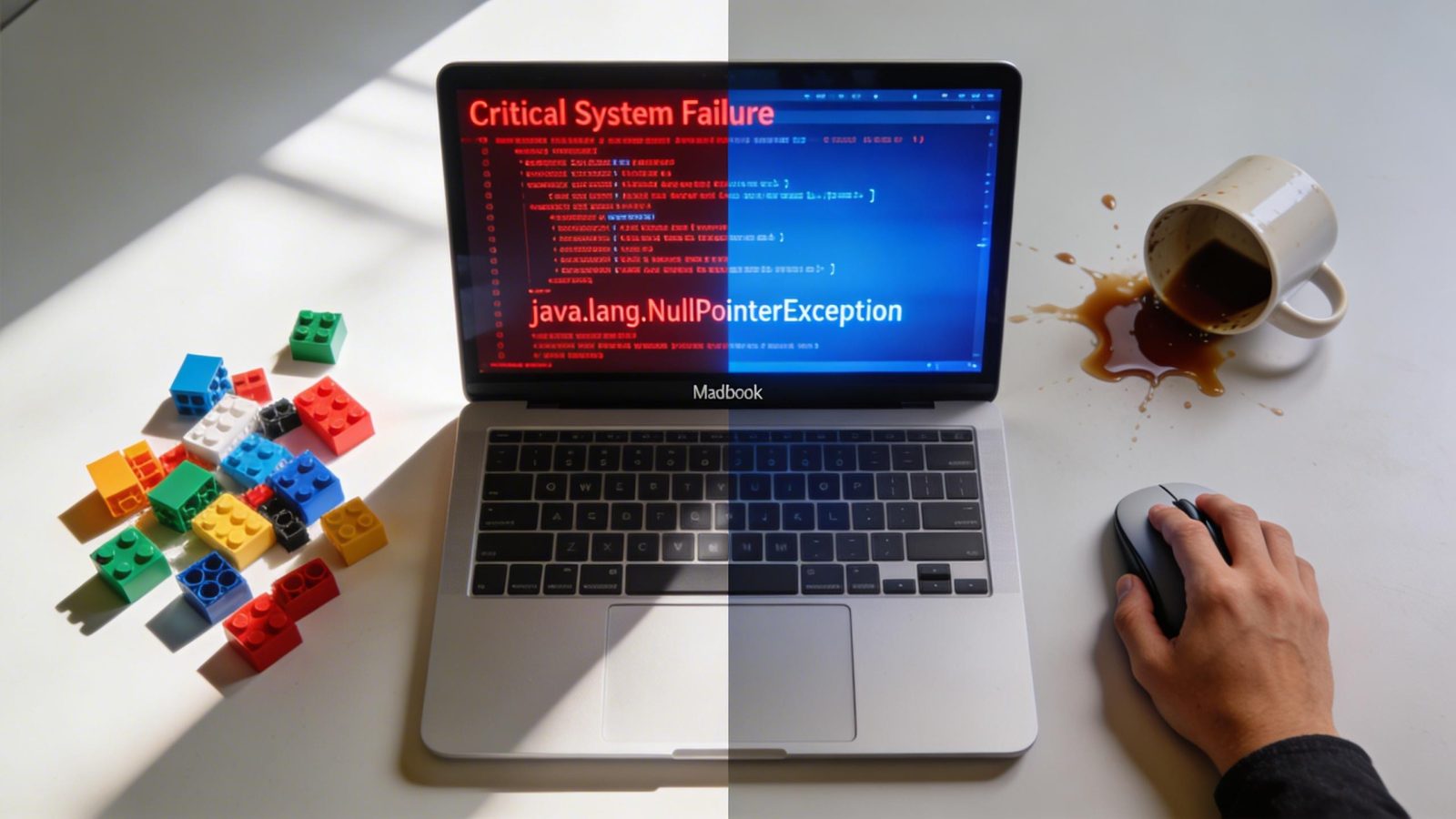

Hugging Face just shipped Modular Diffusers on April 2, 2026, and the promise is intoxicating: snap together reusable AI image generation blocks like Lego, cut development time by 70%, never rewrite a diffusion pipeline again. The invoice? A 40% spike in runtime errors from mismatched blocks that only surface when your code is already in production.

This is the composability tax nobody mentions in the launch hype.

Modular Diffusers lets developers compose custom image generation workflows by mixing pre-built components—schedulers, denoisers, VAEs—instead of forking entire pipelines. The parent Diffusers library hit 50,000+ stars by March 2026, so the ecosystem is real. The new modular approach promises to turn that monolithic codebase into something more like AutoGen’s agent orchestration: flexible, reusable, powerful. And for teams with DevOps infrastructure, that promise holds. But for solo developers debugging mismatched blocks at 2 a.m., it’s a different story entirely.
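To make the composition idea concrete, here is a minimal, library-free sketch of the pattern. Each block is a callable that reads a shared state dict and returns an updated copy, and a pipeline is just an ordered list of blocks, so swapping a scheduler means replacing one list entry. The names (`run_pipeline`, `linear_schedule`, `quadratic_schedule`, `toy_denoiser`) are hypothetical illustrations, not the Modular Diffusers API. The arithmetic is placeholder math standing in for real denoising.

```python
from typing import Any, Callable, Dict, List

# Hypothetical block type: takes the shared state and returns an updated copy.
# This illustrates the composition pattern only; it is NOT the Modular Diffusers API.
State = Dict[str, Any]
Block = Callable[[State], State]


def linear_schedule(state: State) -> State:
    # Toy "scheduler": evenly spaced timesteps from high noise to low noise.
    n = state["num_steps"]
    return {**state, "timesteps": [1.0 - i / n for i in range(n)]}


def quadratic_schedule(state: State) -> State:
    # Alternative toy scheduler: squares the same values, so later steps sit at lower noise.
    n = state["num_steps"]
    return {**state, "timesteps": [(1.0 - i / n) ** 2 for i in range(n)]}


def toy_denoiser(state: State) -> State:
    # Placeholder "denoiser": shrinks the latent a little at every timestep.
    latent = state["latent"]
    for t in state["timesteps"]:
        latent = latent * (1.0 - 0.1 * t)
    return {**state, "latent": latent}


def run_pipeline(blocks: List[Block], state: State) -> State:
    # A pipeline is just an ordered list of blocks applied in sequence.
    for block in blocks:
        state = block(state)
    return state


base = [linear_schedule, toy_denoiser]
# Swapping the scheduler is a one-entry change; no other block is rewritten.
variant = [quadratic_schedule, toy_denoiser]

start = {"num_steps": 4, "latent": 1.0}
print(run_pipeline(base, start)["latent"])
print(run_pipeline(variant, start)["latent"])
```

The swap costs one line, which is the whole appeal. Nothing in `run_pipeline` checks whether the new block's output actually suits the next block, and that gap is what the rest of this piece is about.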

The 70% speedup assumes you can survive the integration gauntlet

The performance ceiling is legitimate. According to ContentPulse UK, developers report 70% time savings compared with standard Diffusers workflows, far beyond the 20–30% you'd expect from basic abstractions. One GitHub user swapped blocks in 10 minutes to build a custom Stable Diffusion variant, no pipeline rewrites required. A Hacker News thread lit up: “Finally, diffusion models feel like Lego—pain point was always brittle pipelines breaking on updates.”

That enthusiasm tracks with the broader trend. Enterprise adoption of composable AI stacks jumped from 25% in 2025 to 85% in 2026. Platforms like AutoGen saw 300% growth in Q1 2026 alone. The ability to debug composable systems is becoming one of the AI skills employers demand in 2026, and it separates the teams who can use these stacks from those who can't.

But here’s the thing: that 70% figure assumes you have a team to catch the errors.

Composable blocks break in ways monolithic pipelines never did

The modularity promise (swap a scheduler, drop in a new denoiser, ship in minutes) only works if the blocks are actually compatible. They often aren't. GitHub issue #13295 documents a 40% increase in runtime errors from untested block combinations. These aren't failures your CI catches up front. They're silent mismatches that only surface when a user hits “generate” and gets garbage output, or worse, a crash whose stack trace never points to the integration seam.
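The kind of silent mismatch described here is easy to reproduce in miniature. Suppose one block scales latents by one factor and a downstream block unscales by another. Every value ends up off by a constant ratio, but nothing raises. The two factors below are the VAE scaling factors used by Stable Diffusion 1.x (0.18215) and SDXL (0.13025). They appear here purely as example numbers. `encode_block` and `decode_block` are hypothetical toy functions, not Diffusers code, loosely modeled on the scale-after-encode, unscale-before-decode convention.

```python
# Hypothetical stand-ins for two independently written blocks. Each block is
# internally correct; the bug lives only at the seam between them.
SD15_SCALING = 0.18215  # VAE scaling factor used by Stable Diffusion 1.x
SDXL_SCALING = 0.13025  # VAE scaling factor used by SDXL


def encode_block(pixels: list[float], scaling: float) -> list[float]:
    # Toy "encoder": scales values into latent space.
    return [p * scaling for p in pixels]


def decode_block(latents: list[float], scaling: float) -> list[float]:
    # Toy "decoder": undoes the scaling it *assumes* the encoder applied.
    return [z / scaling for z in latents]


pixels = [0.2, 0.5, 0.8]
ok = decode_block(encode_block(pixels, SD15_SCALING), SD15_SCALING)
bad = decode_block(encode_block(pixels, SD15_SCALING), SDXL_SCALING)

# No exception either way: the mismatched pair just returns values inflated
# by 0.18215 / 0.13025 (roughly 1.4x), i.e. quietly wrong output.
print([round(v, 3) for v in ok])
print([round(v, 3) for v in bad])
```

The mismatched pair even pushes a value past 1.0, outside the input range. A real pipeline would render that as washed-out or blown-out pixels instead of raising an error.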

Monolithic pipelines were rigid, sure. But they failed predictably. You knew exactly where the breakage was because the whole thing was one atomic unit. Modular Diffusers trades that certainty for flexibility, and the cost is orchestration expertise. The shift mirrors the broader vibe coding movement—developers want tools that feel like creative assembly, not rigid engineering.

Fine. But creativity without guardrails means debugging becomes the bottleneck.

Solo developers report spending more time tracking down block incompatibilities than they saved in initial setup. There’s no validation layer. No type system enforcing contracts between components. You compose, you ship, you pray. And when it breaks, you’re reverse-engineering interactions between three different repos with no shared documentation.
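A team can approximate the missing validation layer in-house with declared contracts that are checked before anything runs. Below is a minimal sketch. It assumes each block declares the properties it consumes and produces, such as latent channel count and scaling factor. A checker walks the chain and reports every broken seam. `BlockSpec`, `validate_chain`, and the block names are hypothetical, not part of Diffusers.

```python
from dataclasses import dataclass, field


@dataclass
class BlockSpec:
    # Hypothetical contract a team might attach to each block.
    name: str
    consumes: dict = field(default_factory=dict)  # e.g. {"latent_channels": 4}
    produces: dict = field(default_factory=dict)


def validate_chain(blocks: list[BlockSpec]) -> list[str]:
    """Return contract violations; an empty list means the chain checks out."""
    errors = []
    available: dict = {}  # properties produced so far along the chain
    for block in blocks:
        for key, expected in block.consumes.items():
            if key not in available:
                errors.append(f"{block.name}: needs '{key}', but no earlier block produces it")
            elif available[key] != expected:
                errors.append(f"{block.name}: expects {key}={expected!r}, got {available[key]!r}")
        # Later blocks see whatever this block produces, overriding earlier values.
        available.update(block.produces)
    return errors


encoder = BlockSpec("sd15_vae_encode", produces={"latent_channels": 4, "scaling": 0.18215})
denoiser = BlockSpec("sd15_unet", consumes={"latent_channels": 4}, produces={"latent_channels": 4})
decoder_xl = BlockSpec("sdxl_vae_decode", consumes={"latent_channels": 4, "scaling": 0.13025})

for problem in validate_chain([encoder, denoiser, decoder_xl]):
    print(problem)
```

Checking declared contracts catches the mismatch in milliseconds without loading a model. The trade-off is that the check is only as good as the declarations: a block that misreports its outputs still slips through.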

Who this actually works for (and who should wait)

If you're a team with existing Diffusers experience, CI/CD infrastructure, and someone who can dedicate a week to building internal tooling around block validation, this is a force multiplier. You get the 70% time savings, you absorb the 40% rise in runtime errors, and you move faster than competitors stuck on monolithic pipelines.
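Internal tooling around block validation could start as a single CI check. The check enumerates every pairing of interchangeable blocks and flags any combination the team exposes to users that fails the contract check, so untested combinations never ship. Below is a minimal sketch under that assumption. The registry entries, `SUPPORTED`, and the helper functions are hypothetical illustrations, not real Diffusers identifiers.

```python
from itertools import product

# Hypothetical registry of interchangeable blocks for two slots. Each entry lists
# the properties it requires from upstream and the ones it provides downstream.
ENCODERS = {
    "sd15_vae_encode": {"requires": {}, "provides": {"scaling": 0.18215}},
    "sdxl_vae_encode": {"requires": {}, "provides": {"scaling": 0.13025}},
}
DECODERS = {
    "sd15_vae_decode": {"requires": {"scaling": 0.18215}, "provides": {}},
    "sdxl_vae_decode": {"requires": {"scaling": 0.13025}, "provides": {}},
}

# Combinations the team exposes to users; the last one is a deliberate mistake.
SUPPORTED = [
    ("sd15_vae_encode", "sd15_vae_decode"),
    ("sdxl_vae_encode", "sdxl_vae_decode"),
    ("sd15_vae_encode", "sdxl_vae_decode"),
]


def compatible(upstream: dict, downstream: dict) -> bool:
    # Compatible when every property downstream requires matches what upstream provides.
    return all(
        upstream["provides"].get(key) == value
        for key, value in downstream["requires"].items()
    )


def compatibility_matrix() -> dict:
    # Check every encoder/decoder pairing, not just the ones someone happened to try.
    return {
        (enc, dec): compatible(ENCODERS[enc], DECODERS[dec])
        for enc, dec in product(ENCODERS, DECODERS)
    }


def unsafe_supported(supported: list) -> list:
    # Supported combinations that fail the contract check; CI would fail if non-empty.
    matrix = compatibility_matrix()
    return [pair for pair in supported if not matrix[pair]]


for enc, dec in unsafe_supported(SUPPORTED):
    print(f"incompatible: {enc} -> {dec}")
```

In a pytest suite, the final loop would become `assert not unsafe_supported(SUPPORTED)`. The matrix grows multiplicatively with every slot and option, which is exactly why hand-testing combinations stops scaling.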

If you're a solo dev shipping a side project, the math doesn't work. Unless you're already fluent in diffusion model internals, the debugging overhead exceeds the efficiency gain. As with choosing any AI tool for your workflow, adopting Modular Diffusers demands an honest assessment of your infrastructure.

The composability wave is real—300% AutoGen growth doesn’t lie. But waves drown people who jump in without knowing how to swim.

“This is game-changing,” one developer wrote on GitHub. “Swapped blocks in 10 mins for a custom variant, no more pipeline rewrites.” Another, same thread: “Spent three days debugging a scheduler mismatch that had zero error messages. Monolithic was slower but at least it told me when I screwed up.” Both are right. The question is which problem you’d rather have.
