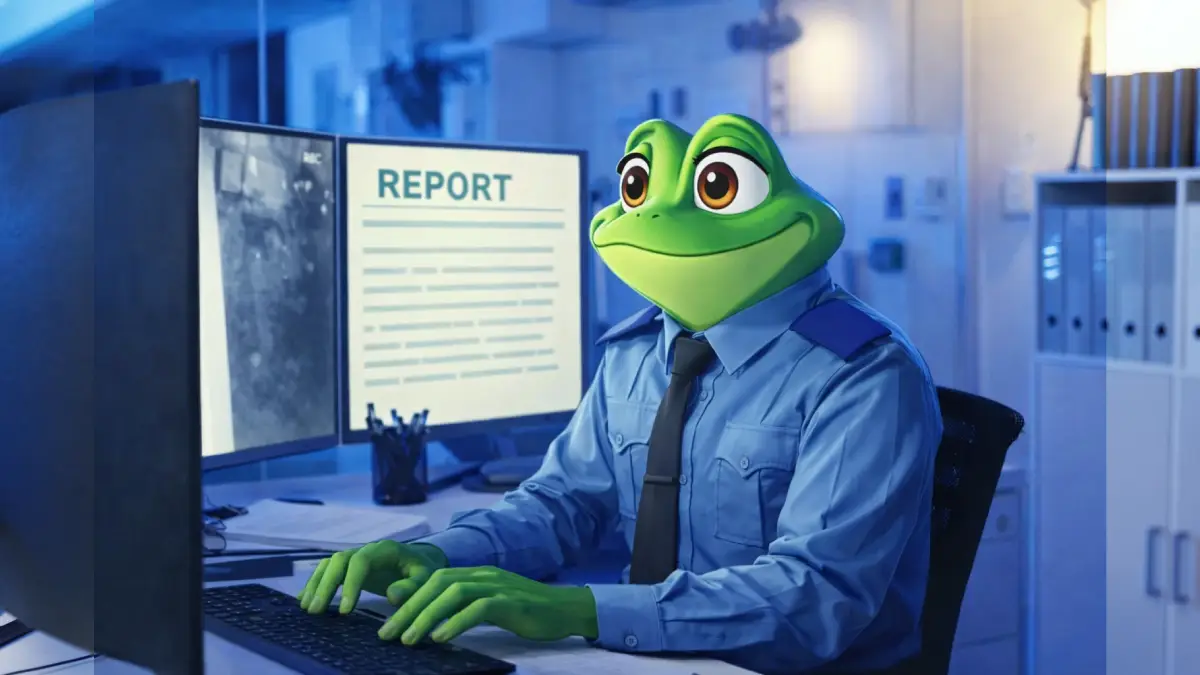

Technology is weaving itself into every facet of daily life, yet sometimes it takes an unexpectedly whimsical detour. Picture reading an official police report and finding a line claiming that an officer has turned into a Disney-style frog. This is not a scene from an animated film. It is the latest twist in law enforcement’s efforts to use artificial intelligence for paperwork.

How did a fairytale moment sneak into an official investigation?

In Heber City, Utah, police officers have adopted AI tools to streamline one of their most tedious responsibilities: writing detailed incident reports. Instead of writing each document from scratch after every call, officers now feed audio and video from their body cameras directly into specialized software.

To save time and let officers concentrate on field duties, the department trialed two distinct solutions in real-world conditions. One came from a well-known technology company; the other was built by two young founders from a prestigious university. Both promised faster, more accurate reports drafted directly from frontline footage and conversations.

When transcription meets cartoons

The surprise came during a routine trial, when an automated report stated that a police officer had transformed into a Disney frog. The AI had picked up background material unrelated to the investigation: snippets of “The Princess and the Frog,” which was apparently audible in the background of the recording.

This surreal result exposed a limitation shared by even the most advanced language models: lacking any real-world awareness, the AI blended fictional content with fact. A magical transformation like this belongs in a fairy tale, not in legal documentation.

The limits of artificial comprehension

What went wrong? AI excels at linking words, recognizing speech patterns, and processing vast amounts of data in seconds. Without human judgment, however, it often fails to separate genuine facts from fictional cues when both appear in the source material. Here, the mix of police evidence and movie dialogue confused the system, producing a report better suited to an animation studio than a prosecutor’s office.

Incidents like this underscore how little contextual understanding machine-generated text can have. Large language models are trained on enormous amounts of text, but they have no built-in way to tell imaginative storytelling from factual reporting unless developers design the system to filter out such content.

Why law enforcement agencies are turning to AI for paperwork

For police officers, accurately documenting incidents remains a demanding aspect of the job. Traditional processes often consume valuable hours that could otherwise be devoted to patrolling streets or investigating cases. By embracing automation, departments seek to accelerate report creation, alleviate administrative fatigue, and enhance productivity across teams.

Producing reliable incident summaries requires capturing details quickly and in a structured way, a task well suited to AI systems connected to body camera feeds. Many solutions let officers review drafts before finalizing them, so each officer retains control over the final report.

Benefits and practical gains

Automated transcription offers more than just time savings. Properly trained systems can boost consistency across reports, reduce manual errors caused by exhaustion, and create searchable archives for efficient evidence management.

- Faster turnaround times for report completion

- Reduced risk of missing vital information due to oversight

- Simplified tracking and retrieval of case histories

- More efficient allocation of personnel resources

These efficiencies enable experienced staff to focus on complex investigations, supporting community safety goals without burdening teams with excessive bureaucracy.

The risks that come with convenience

Every shortcut presents its own hazards, as the infamous frog episode demonstrates. If an electronic draft escapes careful review, it could introduce confusion into legal proceedings or cast doubt on an investigation’s integrity. Even advanced programs are susceptible to misinterpretation when irrelevant or misleading background content slips into the data stream.

To keep such magical mishaps out of official records, any AI deployment must be accompanied by thorough verification steps and robust review protocols. Officers remain essential: they oversee edits, correct unusual insertions, and validate the final output.

Comparing current AI-driven report solutions

Two contrasting approaches led the way in Heber City’s experiment: a seasoned solution from a leading technology provider, and a nimble newcomer emerging from academic circles. While both pursue the vision of seamless documentation, they differ in development philosophy, scope, and perceived reliability.

A brief comparison highlights some distinctions:

| Solution origin | Main features | Potential weak points |
|---|---|---|
| Industry veteran | Advanced integration, robust infrastructure, built for scale | Risk of generic output, dependent on preset algorithms |
| University startup | Agile, customizable, open to rapid experimentation | Less proven in chaotic environments, may need extra filtering |

Both options offer significant potential, especially if adapted to exclude cross-contamination from movies, music, and everyday chatter.

Where is police report automation heading?

Episodes like the accidental frog transformation are certainly attention-grabbing but serve as important reminders that digital assistants require active supervision. More organizations are likely to adopt efficient reporting methods, striving to balance human expertise with technological innovation.

As machine learning teams refine context detection and error filtering, the hope is that future automated police reports will stick to fact, not fiction, ensuring confidence in both the process and its results.
