Are you struggling to distinguish between genuine student effort and automated shortcuts in your classroom? This Turnitin AI review examines how the industry leader integrates machine learning detection to protect academic integrity against generative tools like ChatGPT.

Discover how its reported 98% accuracy rate and seamless institutional workflow can help you manage modern writing challenges with data-driven confidence.

The essential takeaway: Turnitin has established itself as a cornerstone of academic integrity by detecting AI-generated content with a reported accuracy of 98%. This industrial-grade tool helps institutions identify robotic linguistic structures, protecting the value of academic degrees. After analyzing more than 200 million documents, the platform reports that around 11% of submissions contain at least 20% AI-generated text.

Summary of our opinion on Turnitin AI ⭐ 8.5/10

Turnitin’s AI detection is a formidable gatekeeper for academic integrity. It effectively flags machine-generated patterns without requiring complex setups.

However, it isn’t a magic wand for every classroom. The tool works best as a specialized component within its existing, massive educational ecosystem.

Our 8.5/10 score reflects its reported 98% accuracy and vast database. Reliability is high, yet we must account for the friction caused by potential false positives in modern student writing.

For educators, the interface is remarkably seamless. It simplifies a complex task but still demands a human touch. Relying solely on “algorithm-only” justice is risky; professional judgment remains your most important asset.

Ready to see how it actually works? Let’s go deep into the technical mechanics and real-world performance of this tool.

Turnitin’s AI detector isn’t just a filter; it’s a mirror reflecting the complex reality of modern student authorship in the age of LLMs.

Pricing of Turnitin AI

Now, let’s talk about the elephant in the room: the cost of keeping things honest.

Institutional models and license tiers

Turnitin does not sell to individuals. It is strictly an institutional game. Feedback Studio and Originality licenses serve as the primary vehicles for this specific technology.

The pricing follows a per-student annual fee structure. This cost varies based on institution size and geographic region. It is a bulk commitment rather than a pay-as-you-go tool.

AI detection is often bundled within these packages. Most universities already possess the necessary infrastructure. This makes the add-on feel almost invisible to the end user.

Our take on the subscription value

Is it worth the tuition fees? For a large university, the reputational risk of becoming a degree mill is far more expensive than software. Integrity has a high price tag.

Compare this to free alternatives. Free tools are merely toys, while Turnitin is industrial grade. It acts as an insurance policy for academic standards.

Turnitin AI Review: who is it for?

You might think the answer is “everyone,” but the reality is more specific.

This tool primarily targets higher education administrators and professors. They represent the core user base. These professionals require a scalable method to verify thousands of student submissions every single week.

Secondary education and research journals also rely on it. Journals use the technology to protect their peer-review integrity. It is designed for any environment where original thought is the primary currency.

To be clear, it is NOT for students looking to “check themselves.” Turnitin’s business model intentionally excludes direct student sales. This prevents users from simply gaming the detection system.

- Administrators managing academic integrity

- K-12 educators in writing-intensive courses

- Academic journal editors

- Corporate compliance officers for research departments

List of key features

To understand why it’s the market leader, we have to look under the hood at the actual tech.

Technical detection of next-word probability

AI models prioritize the most probable next word. Turnitin’s engine scans for this robotic consistency. It identifies sequences that lack the unpredictable nature of human writing.

This differs from traditional plagiarism checks. Old systems matched text against a database. Now, the tool seeks the “ghost in the machine” by analyzing linguistic structures and sentence patterns to find automation.

The software specifically examines text structures. It flags overly perfect transitions and a lack of natural human “noise.” It’s a game of mathematical probability rather than simple copying.
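
To make the idea of "next-word probability" concrete, here is a minimal sketch in Python. It is not Turnitin's method, which is proprietary and not described in detail here. It assumes a toy bigram model with add-one smoothing, and the function names (`train_bigram_counts`, `mean_surprisal`) and sample sentences are made up for illustration. The point is only that word sequences a model finds predictable earn a lower average "surprise" score than scrambled ones.

```python
import math
from collections import Counter


def train_bigram_counts(corpus_tokens):
    """Count single words and adjacent word pairs in a reference token list."""
    unigrams = Counter(corpus_tokens)
    bigrams = Counter(zip(corpus_tokens, corpus_tokens[1:]))
    return unigrams, bigrams


def mean_surprisal(tokens, unigrams, bigrams):
    """Average -log2 P(next word | previous word), with add-one smoothing.

    Lower values mean the text is more predictable under this toy model.
    """
    pairs = list(zip(tokens, tokens[1:]))
    if not pairs:
        raise ValueError("need at least two tokens")
    vocab_size = len(unigrams) + 1  # one extra slot for unseen words
    total = 0.0
    for prev, nxt in pairs:
        prob = (bigrams[(prev, nxt)] + 1) / (unigrams[prev] + vocab_size)
        total += -math.log2(prob)
    return total / len(pairs)


# Hypothetical reference text standing in for what a language model has seen.
reference = "the cat sat on the mat and the dog sat on the rug".split()
unigrams, bigrams = train_bigram_counts(reference)

predictable = "the cat sat on the mat".split()
scrambled = "mat the on rug dog cat".split()

predictable_score = mean_surprisal(predictable, unigrams, bigrams)
scrambled_score = mean_surprisal(scrambled, unigrams, bigrams)
print(predictable_score < scrambled_score)
```

A real detector would rely on a far larger language model and many more signals, but the underlying comparison is the same: measure how surprising each word is given what came before.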

Hybrid text and humanizer behavior analysis

The “humanizer” cat-and-mouse game is real. Students use tools to add artificial noise to AI text. But Turnitin is evolving to catch these specific, predictable “scrambling” patterns.

The tool handles mixed papers with surprising nuance. It doesn’t just give a blanket “yes” or “no” for the whole file. Instead, it highlights the specific segments that look machine-written.

Sophisticated paraphrasing remains a challenge. It isn’t 100% perfect yet. However, the system is getting much better at spotting the underlying, rigid logic of a machine-generated argument.

Integrated similarity and AI writing scores

The dashboard is quite clear. You get two distinct numbers now. One indicates if they copied existing work, while the other estimates if a bot wrote the content.

The workflow for teachers is seamless. These scores live right inside the Feedback Studio side panel. There is no need to open new tabs or upload files multiple times.

Then there is Turnitin Clarity for writing transparency. This feature tracks the actual writing process. It adds a necessary layer of transparency by showing how a document actually evolved.

Interpreting AI detection reports

A percentage is just a number; what matters is how you read between the lines.

Understanding score percentages and false positives

A 20% score may indicate brainstorming, while 80% suggests a direct copy-paste from ChatGPT. These thresholds require very different pedagogical responses.

Even a false positive rate of around 1% is a significant burden. In a class of 100 honest writers, that averages out to one student wrongly flagged. This requires managing risks with empathy.
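
The class-of-100 arithmetic can be worked through in a few lines of Python. This sketch assumes every student wrote their own work and that each submission is flagged independently at the same rate. Both are simplifications, and the function names are ours.

```python
def expected_false_flags(class_size, false_positive_rate):
    """Average number of honest students flagged by mistake."""
    return class_size * false_positive_rate


def prob_at_least_one_false_flag(class_size, false_positive_rate):
    """Chance that at least one honest student is flagged (independence assumed)."""
    return 1 - (1 - false_positive_rate) ** class_size


average_flags = expected_false_flags(100, 0.01)
chance_of_any = prob_at_least_one_false_flag(100, 0.01)
print(average_flags)            # about 1 student on average
print(round(chance_of_any, 2))  # roughly 0.63
```

Under these assumptions, a teacher with 100 honest students has close to a two-in-three chance of seeing at least one wrongful flag on a single assignment.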

Algorithms often flag non-native speakers unfairly because their writing can appear “robotic.” Use caution, as false positive rates and bias against English learners remain a documented technical limitation.

The role of human judgment in verification

Treat the score as a data point, not a verdict. Use it to start a transparent conversation. Simply ask students to explain their specific writing process.

Compare submissions to previous work. A sudden shift from struggling to “Shakespearean” style suggests the AI score has merit. Context remains everything in these assessments.

Follow institutional policy instead of acting on impulse. Avoid expelling based on numbers alone. Use rubrics that reward the writing process rather than just the final document.

| AI Score Range | Likely Meaning | Recommended Action |
|---|---|---|
| 0-15% | Human writing | Standard grading. |
| 15-50% | AI-assisted | Check for personal voice. |
| 50-80% | Heavy AI usage | Discuss the process. |
| 80%+ | Full AI generation | Follow integrity protocols. |
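
For anyone building these bands into a spreadsheet or grading script, here is one way to express the table above as a lookup. It is a sketch of our own rubric, not a Turnitin feature. Because the ranges share their boundary values (15, 50, 80), it assumes a boundary score falls into the higher band. The function name is hypothetical.

```python
def recommended_action(ai_score_percent):
    """Map an AI writing score (0-100) to the review's band and suggested action.

    Assumption: boundary values (15, 50, 80) belong to the higher band.
    """
    if not 0 <= ai_score_percent <= 100:
        raise ValueError("score must be between 0 and 100")
    if ai_score_percent < 15:
        return ("Human writing", "Standard grading.")
    if ai_score_percent < 50:
        return ("AI-assisted", "Check for personal voice.")
    if ai_score_percent < 80:
        return ("Heavy AI usage", "Discuss the process.")
    return ("Full AI generation", "Follow integrity protocols.")


for score in (10, 20, 65, 80):
    meaning, action = recommended_action(score)
    print(f"{score}%: {meaning} -> {action}")
```

The output is a prompt for human review, in line with the advice above. It should never trigger a penalty on its own.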

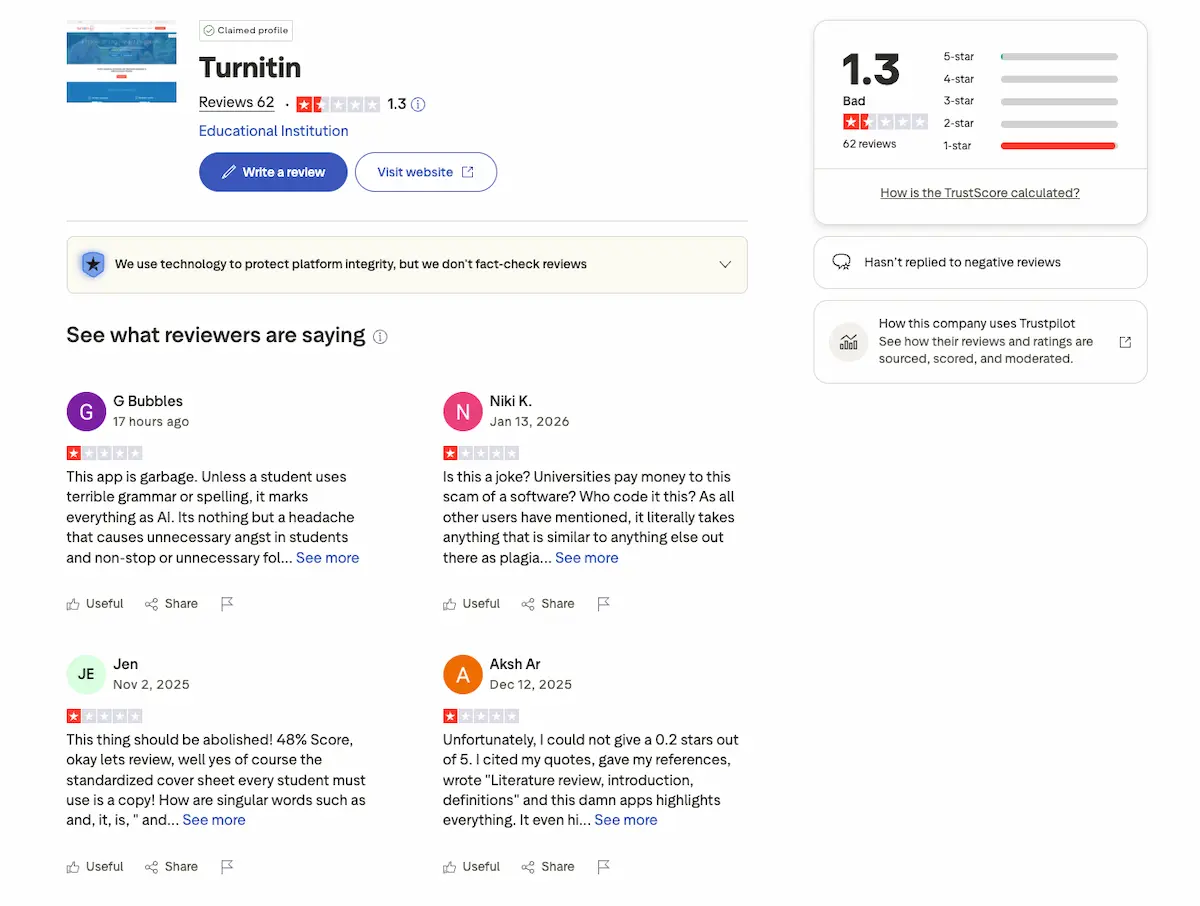

Customer reviews

What are people actually saying on the ground? The feedback is a mixed bag of relief and anxiety.

Many teachers feel relieved. They finally have a tool to combat the flood of AI essays. It restores a sense of fairness in the classroom.

Some universities are pushing back. Institutions like Vanderbilt have paused use due to concerns over accuracy and student impact. They worry about the “guilty until proven innocent” dynamic it creates for students.

Students are generally stressed. Even honest students fear a “false flag.” This tension is changing the teacher-student relationship in ways we haven’t fully grasped yet.

Final verdict

So, where does that leave us?

Turnitin AI detection is currently the best tool for a difficult job. It’s not perfect, but it’s necessary. Use it as a compass, not a judge.

If you manage an institution, it’s an essential buy for modern education strategies. Just make sure your teachers know how to use it with empathy.

Turnitin’s AI detector serves as a powerful gatekeeper for institutional integrity, effectively balancing advanced linguistic analysis with a massive database. By treating these reports as a starting point for dialogue rather than a final verdict, educators can protect academic standards while fostering a transparent, human-centered future for modern writing.

FAQ

How reliable is Turnitin at spotting AI-generated text?

Turnitin reports a 98% accuracy rate in detecting content produced by AI models like ChatGPT. Its technology is specifically designed to identify the highly predictable word sequences typical of Large Language Models, contrasting them with the natural inconsistencies of human writing.

While the system aims to catch about 85% of AI-generated content, it prioritizes safety by keeping false positive rates below 1%. This means the tool is built to be cautious, ensuring that human-written work is rarely flagged incorrectly.
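
How much weight a single flag deserves also depends on how common AI writing is in the group being checked. The sketch below applies Bayes' rule to the figures quoted above (an 85% catch rate and a 1% false positive rate, taken as the upper bound). The share of AI-written submissions is an assumption you supply. The two values used here are purely illustrative, and the function name is ours.

```python
def prob_flag_is_correct(ai_share, catch_rate=0.85, false_positive_rate=0.01):
    """Bayes' rule: chance that a flagged submission really is AI-written.

    ai_share is the assumed fraction of submissions that are AI-written.
    """
    true_flags = catch_rate * ai_share
    false_flags = false_positive_rate * (1 - ai_share)
    return true_flags / (true_flags + false_flags)


# Illustrative assumptions: AI writing is fairly common (11%) or rare (1%).
common_case = prob_flag_is_correct(0.11)
rare_case = prob_flag_is_correct(0.01)
print(round(common_case, 2))  # roughly 0.91
print(round(rare_case, 2))    # roughly 0.46
```

When AI use is rare in a cohort, a flag is far less conclusive, even with a low false positive rate. That is one more reason to treat the score as the start of a conversation.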

Can Turnitin identify papers that have been “humanized” or edited?

The software is increasingly capable of handling hybrid papers where AI and human text are mixed. Rather than giving a simple “yes” or “no,” the tool highlights specific segments of the document that show robotic patterns, allowing educators to see exactly which parts of a submission are questionable.

However, sophisticated paraphrasing and “humanizing” tools that add intentional noise to AI text remain a challenge. Turnitin continues to evolve its linguistic pattern analysis to spot the underlying machine logic even when students attempt to scramble the output.

What should a teacher do if a student receives a high AI score?

A high score should be treated as a data point for a conversation, not an automatic verdict of guilt. Educators are encouraged to use the score to ask the student about their writing process or to compare the submission against the student’s previous work and established writing style.

Institutions should avoid penalizing students based solely on a percentage. The best approach involves a “human-in-the-loop” strategy where the teacher evaluates the context, the student’s intent, and the specific highlighted sections before making a final determination.

Is Turnitin biased against students who speak English as a second language?

There is a recognized concern that non-native English speakers may sometimes produce more structured or “robotic” prose that triggers AI detectors. Because these students often rely on standard phrases and formal syntax, their natural writing can occasionally mimic the predictability of an AI model.

Educators must exercise extra caution when reviewing scores for ESL students. It is vital to use the AI report as a starting point for pedagogical support rather than an absolute proof of academic misconduct.

Can individual students purchase a Turnitin AI check?

No, Turnitin does not sell its services directly to individuals. The platform operates on an institutional model, selling licenses to universities, K-12 schools, and research journals to ensure the tool is used within an official academic framework.

This business model is intentional; it prevents students from “gaming” the system by running multiple drafts through the detector to see what passes. Students typically only see their reports if their instructor or institution enables that feature within the LMS.

How does Turnitin’s AI detection differ from free tools like GPTZero?

While free tools like GPTZero offer accessibility for individual users and support multiple languages, Turnitin is an industrial-grade solution integrated directly into institutional workflows. Turnitin compares submissions against a massive database of 99 billion web pages and 1.8 billion student papers.

Turnitin’s value lies in its seamless integration with Learning Management Systems (LMS) and its dual-report system, which checks for both traditional plagiarism and AI writing simultaneously. It is built for scale, handling thousands of institutional submissions weekly with high security and data governance.
