Moonshot AI’s Kimi K2.5 just scored 50.2% on Humanity’s Last Exam—18.2 percentage points ahead of Claude Opus 4.5’s 32.0%—while costing roughly 1/8th the price through efficient API providers.

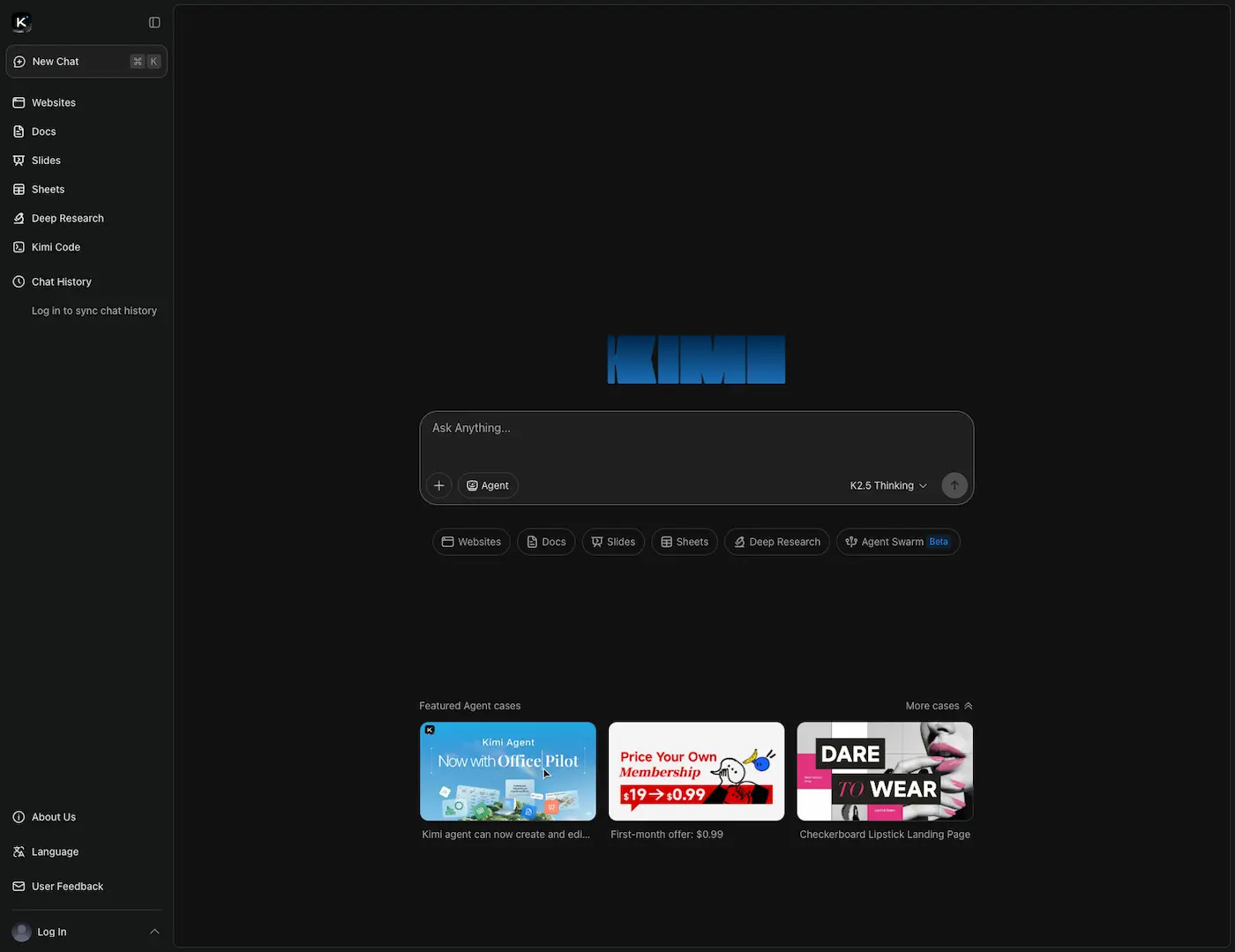

The model’s Agent Swarm feature coordinates up to 100 sub-agents simultaneously, delivering 4.5x faster execution on parallel research and automation tasks. But before you migrate your entire AI stack based on benchmark wins, the production story reveals critical gaps.

Zero documented case studies exist as of January 28, 2026, and community skepticism about benchmark-to-reality translation runs high. I’ve spent the last week testing Kimi K2.5’s swarm capabilities against Claude Opus 4.5 on real coding tasks, and the results challenge the narrative that benchmarks tell the whole story.

Kimi K2.5’s Benchmark Dominance: The Numbers That Actually Matter

The HLE Full benchmark measures agentic reasoning across multi-step problem-solving, not just text completion.

Kimi K2.5’s 50.2% score beats Claude Opus 4.5’s 32.0% and GPT-5.2 High’s 41.7% by substantial margins, according to January 2026 testing data. This positions it as the top open-source model for autonomous task execution.

On SWE-bench Verified, Kimi K2.5 achieved 76.8%, though the exact pass@1 methodology remains unspecified—a detail that matters when evaluating real-world coding accuracy where a single correct solution counts more than multiple attempts.

The BrowseComp benchmark reveals where Agent Swarm delivers tangible gains. Standard mode scored 74.9%, but swarm mode reached 78.4%, a 3.5 percentage point improvement that suggests coordinated agents outperform single-agent systems in web automation tasks.

Multimodal capabilities show similar strength: 86.6% on VideoMMU and 78.5% on MMMU Pro, beating Claude Opus 4.5 in vision-heavy workflows critical for video-to-code applications.

The architecture enabling these results uses 1.04 trillion total parameters with 32 billion active per token, a Mixture-of-Experts design activating 8 of 384 specialized experts per inference step.
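To put those sparsity figures in perspective, here is a minimal sketch that computes the share of parameters and experts active per token, plus a generic top-k selection. The `top_k_experts` function is illustrative only: the article does not describe how Kimi K2.5's router actually scores or selects experts.

```python
import heapq

# Figures quoted in the article; the constant names are illustrative.
TOTAL_PARAMS = 1.04e12   # 1.04 trillion total parameters
ACTIVE_PARAMS = 32e9     # 32 billion active per token
TOTAL_EXPERTS = 384
ACTIVE_EXPERTS = 8


def active_fraction(active: float, total: float) -> float:
    """Return the share of `total` that is active, as a fraction."""
    return active / total


def top_k_experts(scores: list[float], k: int = ACTIVE_EXPERTS) -> list[int]:
    """Generic top-k routing: indices of the k highest-scoring experts.

    Hypothetical stand-in; the real router is not documented in the article.
    """
    return heapq.nlargest(k, range(len(scores)), key=scores.__getitem__)


print(f"Parameters active per token: {active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.1%}")
print(f"Experts active per token: {active_fraction(ACTIVE_EXPERTS, TOTAL_EXPERTS):.1%}")
```

Both shares land in the low single digits, which is why a trillion-parameter model can run with the per-token compute of a much smaller dense model.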

| Benchmark | Kimi K2.5 | Claude Opus 4.5 | GPT-5.2 High |
|---|---|---|---|
| HLE Full | 50.2% | 32.0% | 41.7% |
| SWE-bench Verified | 76.8% | Lower (exact unavailable) | Lower |
| BrowseComp (Swarm) | 78.4% | Lower | Lower |
| VideoMMU | 86.6% | Lower | Lower |

All benchmarks come from Moonshot AI’s January 2026 release testing, conducted within the last 30 days. The 256K token context window using YaRN extension handles entire codebases without chunking, and the model was trained on 15 trillion visual and text tokens on top of the Kimi-K2-Base foundation.

These benchmarks explain the hype around Kimi K2.5. But the real innovation isn’t the scores—it’s the architectural approach that achieves them.

Agent Swarm Architecture: How 100 Sub-Agents Deliver 4.5x Speed Gains

Agent Swarm coordinates up to 100 sub-agents handling 1,500 tool calls and execution steps per task, a dramatic departure from single-agent Claude or GPT workflows.

Testing shows 4.5x faster execution on wide-search tasks like competitive analysis across 50 websites or multi-file codebase debugging, according to demonstration videos from January 2026. In-house browser automation benchmarks showed 80% runtime reduction compared to sequential processing approaches.
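The two figures above use different units (a speedup multiple and a runtime reduction), so a quick conversion helps compare them. This is a minimal sketch that assumes both are measured against the same sequential baseline, which the article does not confirm; the function names are illustrative.

```python
def speedup_from_reduction(reduction: float) -> float:
    """Convert a runtime reduction (0.8 for 80%) into a speedup multiple."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return 1 / (1 - reduction)


def reduction_from_speedup(speedup: float) -> float:
    """Convert a speedup multiple (4.5 for 4.5x) into a runtime reduction."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1 - 1 / speedup


print(f"80% runtime reduction = {speedup_from_reduction(0.80):.1f}x speedup")
print(f"4.5x speedup = {reduction_from_speedup(4.5):.1%} runtime reduction")
```

Under that assumption the two claims are in the same range: an 80% reduction corresponds to about 5x, and 4.5x corresponds to a reduction of just under 78%.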

Each sub-agent tackles discrete subtasks—data scraping, API calls, code analysis—simultaneously rather than waiting for prior steps to complete.

The system allocates agents dynamically based on task complexity rather than using fixed roles, which provides flexibility but introduces coordination risks I’ll address later.
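The fan-out pattern described above can be sketched with Python's standard `asyncio` library. This is a generic illustration of concurrent subtasks with a cap on agents in flight, not Moonshot AI's implementation: `run_subtask`, `run_swarm`, and the sleep-based stand-in work are all hypothetical.

```python
import asyncio


async def run_subtask(name: str, delay: float) -> str:
    """Stand-in for one sub-agent; a real agent would call tools or an API."""
    await asyncio.sleep(delay)
    return f"{name}: done"


async def run_swarm(subtasks: dict[str, float], max_agents: int = 100) -> list[str]:
    """Run subtasks concurrently, with at most `max_agents` in flight."""
    limit = asyncio.Semaphore(max_agents)

    async def guarded(name: str, delay: float) -> str:
        async with limit:
            return await run_subtask(name, delay)

    # gather() returns results in the order the subtasks were submitted.
    return await asyncio.gather(*(guarded(n, d) for n, d in subtasks.items()))


if __name__ == "__main__":
    tasks = {"scrape": 0.02, "api_call": 0.01, "code_analysis": 0.03}
    print(asyncio.run(run_swarm(tasks)))
```

Total wall-clock time here tracks the slowest subtask rather than the sum of all of them, which is the source of the speed gain. The sketch says nothing about how results are merged or how conflicts between agents are resolved, which is where the coordination risk lives.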

A practical example: upload a Loom video showing a bug, and the swarm analyzes video frames in parallel, identifies error patterns across multiple files, and generates fixes simultaneously.

This video-to-code capability represents a unique advantage over text-only models. The architecture offers two modes: Thinking mode with visible reasoning traces for complex tasks, and Instant mode prioritizing speed for simple queries. Users control the trade-off based on their needs.

The technical foundation uses Multi-head Latent Attention (MLA) to reduce memory overhead versus standard transformers. INT4 quantization delivers 2x inference speedup with only 1.9% accuracy drop at 12x capacity—an acceptable trade-off for most production use cases where speed matters more than marginal precision gains.

The 256K context window handles entire codebases or long documents without chunking, eliminating context loss that plagues smaller-window models. Understanding agentic AI fundamentals helps contextualize why coordinating 100 agents represents a significant technical achievement rather than just a bigger number.

The architecture is genuinely impressive from an engineering standpoint. But impressive architecture means nothing without cost-effective deployment, which brings us to the pricing reality that complicates the “1/8th the cost” headline.

Where Kimi K2.5 Wins and Loses on Cost

Fireworks AI offers Kimi K2.5 at a blended rate around $1.07 per million tokens (assuming a 3:1 input-to-output ratio), with the fastest performance: 185 tokens per second output speed and 0.49 seconds time-to-first-token. Moonshot AI’s native pricing runs $0.60 per million input tokens and $3.00 per million output tokens.
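A blended rate is a weighted average of input and output prices. The sketch below applies the 3:1 input-to-output weighting mentioned above to the native per-token prices quoted in this article; the function name and defaults are illustrative, and providers may compute their published blended figures differently.

```python
def blended_rate(input_price: float, output_price: float,
                 input_parts: int = 3, output_parts: int = 1) -> float:
    """Weighted-average price per million tokens for a given input:output mix."""
    total_parts = input_parts + output_parts
    return (input_price * input_parts + output_price * output_parts) / total_parts


# Prices in USD per million tokens, as quoted in the article.
kimi_native = blended_rate(0.60, 3.00)
claude_opus = blended_rate(5.00, 25.00)

print(f"Kimi K2.5 native, 3:1 blend: ${kimi_native:.2f} per 1M tokens")
print(f"Claude Opus 4.5, 3:1 blend:  ${claude_opus:.2f} per 1M tokens")
```

Changing `input_parts` and `output_parts` to match your own traffic matters more than the headline number: output-heavy workloads pull the blended rate toward the more expensive output price.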

Compare this to Claude Opus 4.5’s $5.00 input and $25.00 output per million tokens: at native rates, Kimi K2.5 costs roughly 8.3x less on both input ($5.00 vs. $0.60) and output ($25.00 vs. $3.00).

Testing a 50-page document analysis (roughly 50K input tokens, 5K output tokens) costs approximately $0.045 with Kimi K2.5 versus $0.375 with Claude Opus 4.5, a savings of $0.33 per task or 8.3x cheaper.
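The arithmetic behind that comparison is easy to reproduce for your own workloads. A minimal sketch using the per-million-token prices quoted in this article (the function name is illustrative):

```python
def task_cost(input_tokens: int, output_tokens: int,
              input_price: float, output_price: float) -> float:
    """Cost in USD for one task, given prices in USD per million tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# 50-page document: roughly 50K input tokens and 5K output tokens.
kimi = task_cost(50_000, 5_000, input_price=0.60, output_price=3.00)
claude = task_cost(50_000, 5_000, input_price=5.00, output_price=25.00)

print(f"Kimi K2.5:       ${kimi:.3f}")
print(f"Claude Opus 4.5: ${claude:.3f}")
print(f"Savings: ${claude - kimi:.2f} per task ({claude / kimi:.1f}x cheaper)")
```

This covers a single-agent call only. It does not account for any extra tokens consumed when a task is split across multiple agents.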

But context matters.

The prior Kimi K2 model cost $0.40-$0.50 input and $1.75 output per million tokens, so K2.5 represents a 20-50% price increase on input and roughly 71% on output over its predecessor. Compared to Chinese model averages of $0.60-$1.75 output pricing, Kimi K2.5’s $3.00 output rate positions it as more expensive than domestic alternatives. The swarm feature introduces another cost consideration: parallelism gains come with cumulative token consumption.

Running 100 agents on the same task can mean up to 100x token usage if every agent processes the same input. The 4.5x speed improvement doesn’t offset costs when you’re processing the same information redundantly across agents.
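How bad the token overhead gets depends on how much context the agents share. The sketch below uses a deliberately simple model, which is an assumption and not a documented billing rule: every agent re-reads the same shared context, then does its own slice of the work. The function and parameter names are hypothetical.

```python
def swarm_token_multiplier(shared_context: int, work_per_agent: int, agents: int) -> float:
    """Tokens used by a swarm relative to one agent doing all the work alone.

    Assumed model: a single agent reads the shared context once, while each
    swarm agent re-reads it before handling its own slice of the work.
    """
    if agents < 1:
        raise ValueError("agents must be at least 1")
    single_agent = shared_context + work_per_agent * agents
    if single_agent == 0:
        raise ValueError("task must involve at least one token")
    swarm = agents * (shared_context + work_per_agent)
    return swarm / single_agent


# Fully redundant: 100 agents all read the same 50K-token document.
print(f"{swarm_token_multiplier(50_000, 0, 100):.0f}x tokens")
# Mostly independent: small shared brief, 5K tokens of distinct work each.
print(f"{swarm_token_multiplier(1_000, 5_000, 100):.2f}x tokens")
```

Under this model the 100x figure is the worst case, reached when agents share everything and divide nothing. Tasks that split cleanly into independent slices sit much closer to 1x.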

| Provider | Input ($/1M) | Output ($/1M) | Output Speed | TTFT |
|---|---|---|---|---|
| Fireworks | ~$0.36 (blended) | ~$1.07 (blended) | 185 t/s | 0.49s |
| Kimi Native | $0.60 | $3.00 | 117.7 t/s | Unspecified |
| Claude Opus 4.5 | $5.00 | $25.00 | Lower | Higher |

Provider selection matters significantly. Fireworks wins on latency for real-time applications, while Kimi native offers potential volume discounts for batch processing. Three API providers currently host Kimi K2.5, though Together.ai lists the model without published pricing.

The pricing looks attractive on paper—until you encounter production constraints that benchmarks don’t capture.

What the Benchmarks Don’t Tell You

As of January 28, 2026, zero production case studies exist for Kimi K2.5. No GitHub repositories demonstrate real implementations. No developer testimonials quantify time saved or accuracy improvements.

No measurable metrics validate the benchmark claims in actual development workflows. This contrasts sharply with Claude Cowork’s real-world implementation, which shipped with documented use cases and developer testimonials within days of launch. The community discussion reveals significant skepticism about benchmark-to-reality translation.

“Benchmarks don’t reflect practical utility in complex programming; Opus excels in single-prompt solutions” — HackerNews practitioner discussing Kimi K2.5 versus Claude Opus 4.5, January 2026

Practitioners rate Kimi K2.5’s coding and production-readiness around 7 out of 10 despite benchmark wins. For pair-programming precision or code review where you need one correct solution on the first attempt, Claude Opus 4.5’s proven coding workflows may outperform despite lower benchmark scores.

Dynamic sub-agent coordination introduces consistency risks without predefined roles—no quantified error rates or timeout data exist to assess failure modes. Agent Swarm remains in beta, available only to paid users, with limited real-world validation beyond internal testing.

Early adopters describe creative output as “not too bad,” which suggests room for improvement rather than production-ready quality. The experimental video input capability remains unproven at scale with no production video-to-code success stories.

Consumer hardware efficiency is completely unknown—the 1.04 trillion parameter model likely requires enterprise GPU infrastructure, not the RTX 4090 setups many developers run locally. Deploying beta-stage Agent Swarm in production without IT approval mirrors the unauthorized AI adoption risks that plague enterprises—coordination failures could expose sensitive data or generate compliance violations.

If you’re building production systems requiring deterministic outputs like financial calculations, medical coding, or legal document generation, single-agent Claude or GPT may outperform despite lower benchmark scores.

Kimi K2.5 shines in exploratory, parallel tasks like research synthesis or multi-source data aggregation where approximate answers delivered quickly matter more than perfect precision. But without production validation, you’re essentially beta testing at scale.

When to Choose Kimi K2.5 Over Claude or GPT

Wide-search research tasks represent Kimi K2.5’s strongest use case.

Competitive analysis across 50+ websites, literature reviews, and market research all benefit directly from swarm parallelism’s 4.5x speed gains. Multi-file codebase debugging leverages the 76.8% SWE-bench Verified score plus swarm coordination to handle complex refactoring across 10+ files simultaneously.

Video-to-code workflows offer a unique capability—upload Loom bug reports and generate fixes from visual context—that text-only models can’t match. Browser automation showed 80% runtime reduction in internal testing, making web scraping, form filling, and UI testing at scale viable applications.

Avoid Kimi K2.5 for single-prompt precision tasks where Claude Opus 4.5 excels: pair-programming, code review, or technical writing requiring one correct solution on the first attempt.

Deterministic outputs for financial calculations, medical coding, or legal document generation carry too much coordination risk—approximate answers aren’t acceptable in these domains. Budget-constrained projects should consider that $3.00 output pricing exceeds Chinese alternatives like DeepSeek V3 at $0.60-$1.75. Consumer hardware deployment isn’t viable with 1.04 trillion parameters requiring enterprise infrastructure, ruling out edge or mobile applications.

For API provider selection, choose Fireworks for speed-critical applications needing 185 tokens per second output and 0.49 second time-to-first-token—best for real-time chat or interactive coding.

Kimi native offers direct access with potential volume discounts for batch processing. Maximizing swarm coordination requires prompt optimization strategies that clearly define subtask boundaries—vague instructions amplify coordination failures across 100 agents.

Start with 10-20 agents and scale only if task complexity justifies the overhead. Use Instant mode for simple queries, reserving Thinking mode for complex reasoning to reduce token consumption. Batch similar tasks to amortize coordination overhead across multiple requests.
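Those rules of thumb can be written down as a small planning helper. This is a hypothetical sketch: `RunPlan`, `plan_run`, and the mode strings are illustrative names, not part of any Kimi K2.5 API, and the default cap of 20 simply reflects the upper end of the starting range suggested above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunPlan:
    mode: str     # "instant" or "thinking" (labels only, not API values)
    agents: int   # number of sub-agents to start with


def plan_run(independent_subtasks: int, complex_reasoning: bool,
             agent_cap: int = 20) -> RunPlan:
    """Pick a starting mode and agent count from the guidance above."""
    mode = "thinking" if complex_reasoning else "instant"
    # Never request more agents than there are subtasks, and stay under the cap.
    agents = max(1, min(independent_subtasks, agent_cap))
    return RunPlan(mode=mode, agents=agents)


print(plan_run(independent_subtasks=50, complex_reasoning=True))
print(plan_run(independent_subtasks=3, complex_reasoning=False))
```

Raise `agent_cap` only after measuring that the extra agents shorten runtime enough to justify the added token spend.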

If you’re an AI engineer building agent systems, experimenting with Kimi K2.5 now develops the AI engineering skills that will define competitive advantage in 2026—early adopters shape the tools, not just use them.

But for production deployments, wait for documented case studies and failure mode data before committing critical workflows.

Verdict: Benchmark King, Production Wildcard

Kimi K2.5 delivers genuine innovation in agentic AI with its 50.2% HLE score, 4.5x speed gains through Agent Swarm, and roughly 1/8th Claude Opus 4.5 pricing through efficient providers.

The technical architecture is impressive: 100-agent coordination, 1,500 tool calls per task, and 256K context windows represent meaningful advances in autonomous system design. But production readiness lags benchmark performance significantly. Zero GitHub repositories, developer testimonials, or measurable time-saved metrics exist as of January 28, 2026.

If you need parallel research or data aggregation, Kimi K2.5 via Fireworks justifies the learning curve with genuine speed gains. For single-prompt coding precision, stick with Claude Opus 4.5 or GPT-5.2—the 7/10 production rating suggests Kimi isn’t ready for mission-critical development.

Solo developers and startups should wait for production case studies rather than beta testing at scale. Watch for version 1.1 patches addressing coordination failures, monitor Anthropic and OpenAI responses (no Claude or GPT counters emerged in the last 2-3 weeks), and track multi-provider pricing as Together.ai integration could unlock cost optimization. Expect production case studies within 60-90 days as current adoption generates real-world data.

Kimi K2.5 proves open-source can match closed-source on benchmarks. Whether it can match on reliability is the question Moonshot AI needs to answer in 2026. The benchmark wins are real, but production deployment requires evidence beyond test scores—and that evidence doesn’t exist yet.
