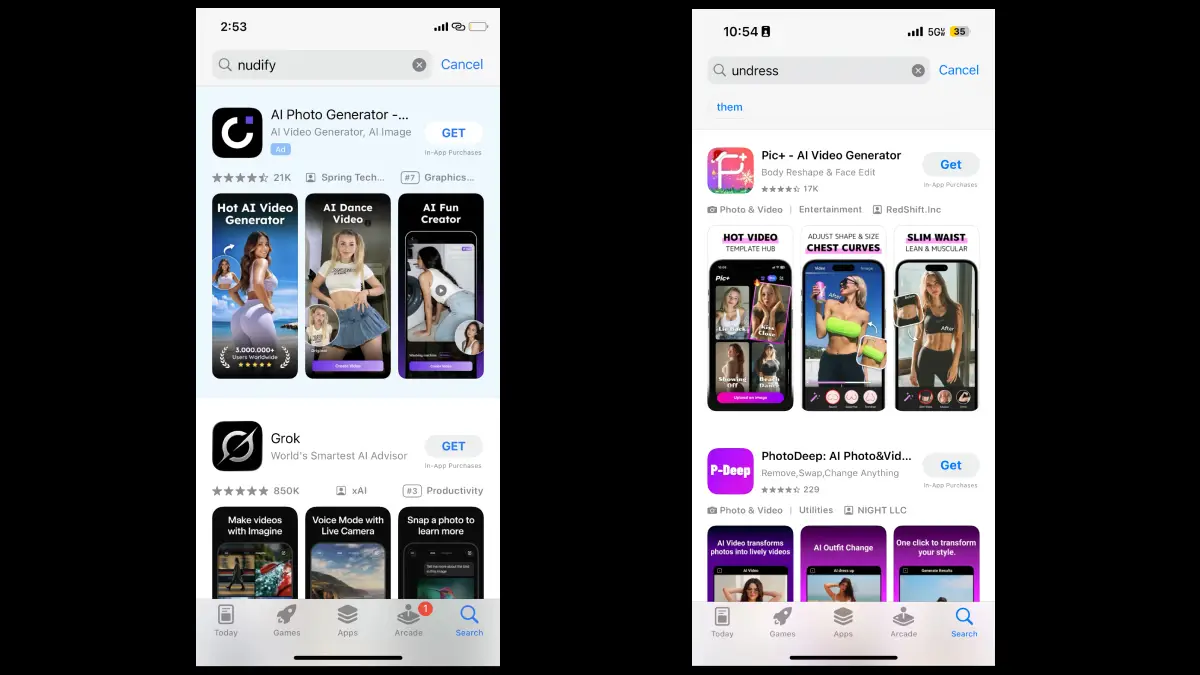

Recent investigations have uncovered dozens of mobile applications available on major app stores that use artificial intelligence to digitally remove clothing from photos, exposing a troubling gap in how moderation policies are enforced.

Despite repeated claims of strict oversight by leading tech platforms, the ongoing presence of such apps highlights persistent gaps in content control and raises questions about the alignment between company policies and real-world enforcement.

What types of apps are at the center of the controversy?

A range of applications has come under scrutiny due to their capacity to generate sexualized images using advanced algorithms.

These services typically allow users to upload ordinary photos, after which the software generates an altered image in which the subject appears undressed, a clear departure from the ethical standards that mainstream platforms say they uphold.

The majority of these programs fall into two main categories. Some employ sophisticated ‘nudification’ models capable of processing fully clothed images and outputting manipulated results.

Others leverage what is commonly known as face-swapping technology, extracting faces and superimposing them onto unrelated nude bodies sourced from extensive databases or generated by AI. Both types attempt to remain accessible despite apparent violations of guidelines established by hosting marketplaces.

How do app stores claim to protect users?

In principle, major platform operators assert that they maintain rigorous standards to vet applications before public release. According to official statements from these tech giants, the primary goal is to ensure safety, quality, and respectability across all distributed offerings.

Developers aiming to publish must adhere to detailed requirements, theoretically minimizing potential harm or abuse tied to inappropriate content.

Despite these rules, investigative efforts reveal substantial discrepancies between stated principles and actual marketplace practices. Several applications providing explicit undressing features manage to bypass initial review steps, sometimes through ambiguous descriptions or rapid updates following approval.

This undermines assurances given to both customers and regulators regarding proactive risk management.

Why have monitoring efforts fallen short?

The challenge extends beyond simply identifying software that openly advertises its problematic capabilities. Many app descriptions are intentionally vague, avoiding obvious trigger words while embedding prohibited functions deep within the user experience.

Automated systems and human reviewers alike can miss nuanced signals, especially as developers grow more adept at evading detection.

This loophole allows such applications to circulate widely until third parties or advocacy organizations flag them. By the time remedial action is taken, significant harm may already have been done, particularly given how quickly apps spread through digital downloads.

What risks do these technologies introduce?

The consequences go far beyond breaches of store policy. Undressing apps exploit unsuspecting individuals by producing synthetic sexual imagery without consent. This practice not only damages reputations but also inflicts lasting psychological impacts, especially among younger victims or those targeted in harassment campaigns. The accessibility of these tools lowers barriers for malicious actors, creating fertile ground for widespread digital abuse.

Cases in which seemingly harmless personal photographs are weaponized by strangers or acquaintances reveal a darker aspect of the AI boom. While often framed as novelty entertainment, the irreversible effects on privacy and dignity draw grave concern from legal experts, social commentators, and rights defenders worldwide.

Comparing undressing apps and face-swap technologies

While both approaches manipulate visual identity, they differ in how they operate. Undressing apps directly simulate an unclothed appearance from the uploaded image, often preserving body shape and posture for heightened realism. In contrast, face-swapping software matches facial characteristics to stock or artificially created nudes, sometimes sacrificing coherence for broader flexibility.

Both techniques foster harmful outcomes, though undressing AI tends to feel more invasive due to its direct alteration of genuine photographs. The shared result remains the unauthorized distribution of intimate likenesses.

Broader implications for digital transparency

The persistence of these controversial applications, despite repeated warnings, puts existing regulatory and technical safeguards under scrutiny. Watchdog groups consistently advocate for stricter supervision, seeking mechanisms addressing both overt violations and subtle workarounds. However, the rapid advance of generative technologies makes staying ahead increasingly complex.

As scrutiny intensifies, calls grow for clearer communication between app stores, developers, and stakeholders regarding what constitutes ethical innovation versus predatory behavior. Transparency reports, user education initiatives, and partnerships with external monitors all feature in evolving solutions under consideration.

Pushing for change: current challenges and future directions

Momentum is building toward enhanced accountability within digital marketplaces. Policy shifts, improved vetting processes, and greater reliance on AI-driven flagging systems rank high among proposed interventions. Simultaneously, conversations around user empowerment—including easy reporting interfaces and robust privacy settings—are gaining traction.

Some industry observers note that addressing these issues will require not just procedural fixes but also cultural change within companies, with long-term trust prioritized over short-term gains. Anticipating new forms of abuse means adapting rules, investing in staff training, and maintaining open dialogue with affected communities.
