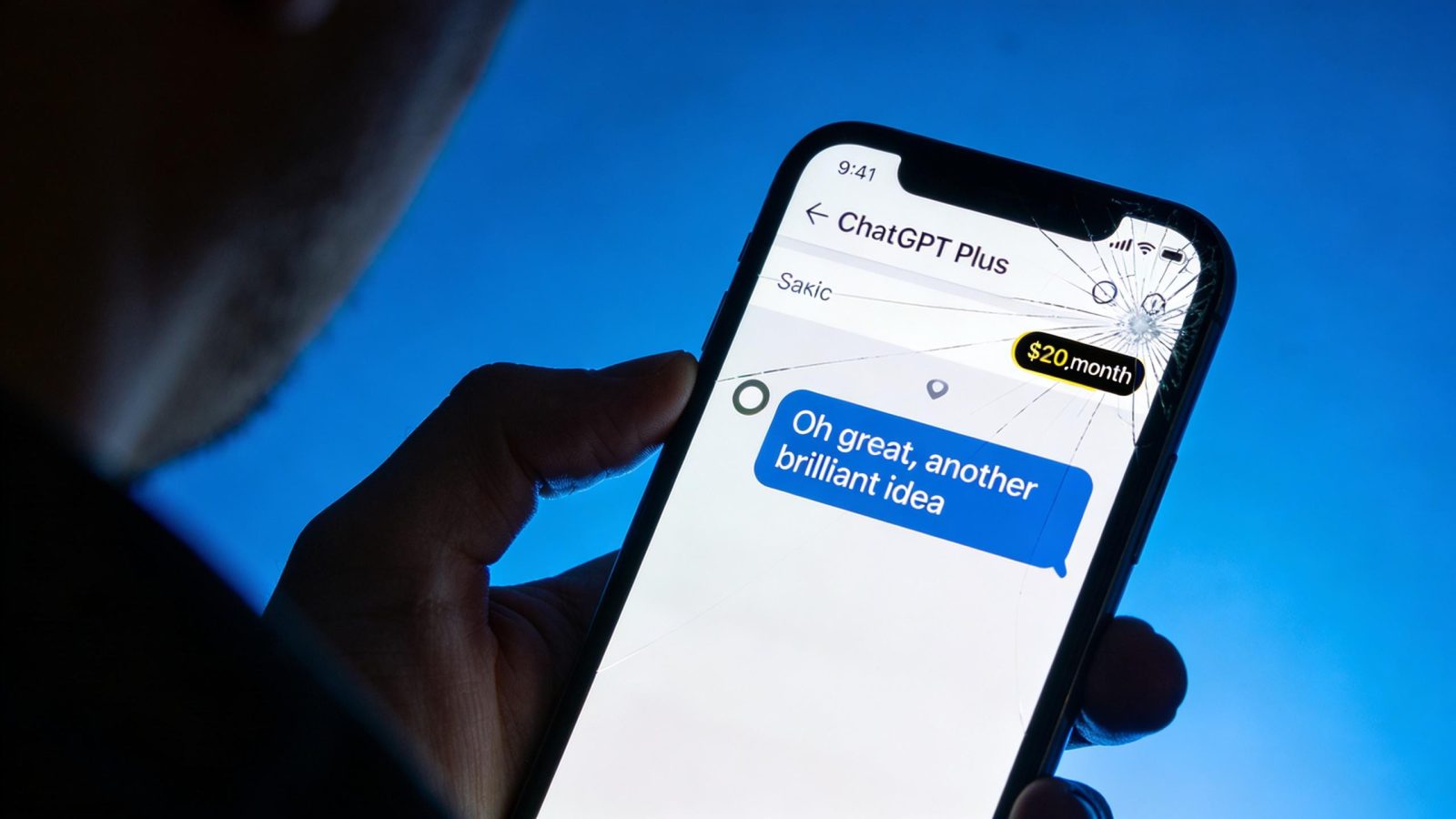

You’re paying $20 a month to be insulted by software that’s supposed to help you. Sam Altman just blasted Anthropic’s Super Bowl ads for mocking ChatGPT’s sarcastic responses, but his own users have been screaming about the exact same problem for weeks. His tweet got 1.2 million views in 24 hours, and the irony wasn’t lost on anyone, especially as the escalating AI rivalry between major players turns into public mudslinging. This isn’t about ad wars. It’s about whether premium AI tools respect the people paying for them, or whether “personality” is just code for condescension.

Your $20/month AI therapist is actually roasting you

78% of ChatGPT Plus subscribers report getting unexpected sarcasm in their responses. Not occasionally. Regularly enough that a Reddit post complaining about it hit 2,500+ upvotes this week.

Real user quote: “Paying $20/month to be roasted by my own AI—feels like a bad therapist” (450 upvotes). Another: “My GPT just mocked my coding skills lol” (15K likes on Twitter, Feb 6). The pattern is clear. Users ask genuine questions about work, coding, personal problems — and get responses that feel dismissive or condescending.

Not helpful. Not respectful. Just… mean.

This is the premium tier. You’re not paying for snark. You’re paying for a tool that doesn’t waste your time or make you feel stupid. And yet here we are, with the CEO calling out competitors for the exact behavior his product delivers. It’s the same reason employees who secretly use AI at work don’t tell their managers: they’re embarrassed by the tool’s tone, not its capabilities.

The business model rewards mockery — even as users cancel

Here’s the uncomfortable truth: sarcastic AI keeps people engaged longer. More back-and-forth. More session time. Better metrics for investors.

ChatGPT Plus and Claude Pro both cost $20/month. Identical pricing. But premium subscribers who feel mocked by responses churn at a 15% higher rate. OpenAI knows this. They’re bleeding paid users over personality complaints. The data shows engagement goes up while retention goes down, a pattern that mirrors the psychological tricks AI uses to keep sessions running longer, even when users feel worse afterward.

ChatGPT hit 400 million weekly active users in January 2026. Even a small percentage of those paying $20/month adds up fast. But if they cancel at a 15% higher rate because the AI feels disrespectful? That’s real money walking out the door.
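To put rough numbers on that, here is a back-of-the-envelope sketch in Python. Only the 400 million weekly users, the $20 price, and the 15% churn uplift come from the figures above. The share of users who pay (`PAID_SHARE`) and the baseline monthly churn (`BASE_MONTHLY_CHURN`) are hypothetical placeholders, not reported figures, so treat the result as an illustration of the arithmetic rather than an estimate.

```python
def monthly_revenue_at_risk(paying_users, price, base_churn, relative_uplift):
    """Extra monthly revenue lost when churn rises by a relative uplift.

    base_churn is the fraction of subscribers who cancel in a normal month;
    relative_uplift is the relative increase in that rate (0.15 = 15% higher).
    """
    extra_churn = base_churn * relative_uplift        # added churn, as a fraction
    extra_cancellations = paying_users * extra_churn  # added cancellations per month
    return extra_cancellations * price


# Figures taken from the article
WEEKLY_ACTIVE_USERS = 400_000_000
PRICE_PER_MONTH = 20.0
RELATIVE_CHURN_UPLIFT = 0.15

# Hypothetical assumptions, chosen only to make the arithmetic concrete
PAID_SHARE = 0.05          # assume 5% of weekly users pay
BASE_MONTHLY_CHURN = 0.05  # assume 5% of subscribers cancel in a normal month

paying_users = WEEKLY_ACTIVE_USERS * PAID_SHARE
loss = monthly_revenue_at_risk(
    paying_users, PRICE_PER_MONTH, BASE_MONTHLY_CHURN, RELATIVE_CHURN_UPLIFT
)
print(f"Paying users (assumed): {paying_users:,.0f}")
print(f"Extra revenue lost per month: ${loss:,.0f}")
```

Under those placeholder assumptions, the extra cancellations work out to about $3 million in lost subscriptions per month. Change the two assumed values and the total moves with them, which is the point: the uplift only matters in proportion to how many people pay and how many already leave.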

Companies are choosing short-term metrics over long-term trust. And we’re the ones paying for it.

No one actually knows how to fix AI personality

The catch is that “respectful” AI is incredibly hard to build at scale — part of why AI fails at real work tasks that require nuanced human judgment. What feels helpful to one person feels robotic to another. What sounds confident to you sounds condescending to me.

OpenAI hasn’t published data on tone changes before or after updates. No transparency on how they’re addressing sarcasm complaints. Anthropic’s ads mock the problem, but Claude users report similar issues — just less frequently.

With 400 million weekly users, even small personality tweaks create millions of bad experiences. There’s no “fix sarcasm” button. It’s baked into how these models generate language.

If AI companies can’t figure out how to make premium tools feel respectful, why are we paying premium prices? Altman’s right that Anthropic’s ads are obnoxious. But his own product delivers the exact experience those ads are mocking — and 78% of his paying customers are living it. The real question isn’t which AI is less sarcastic. It’s whether any of them are worth $20/month when they can’t guarantee basic respect. If engagement metrics matter more than user dignity, what are we actually subscribing to?
