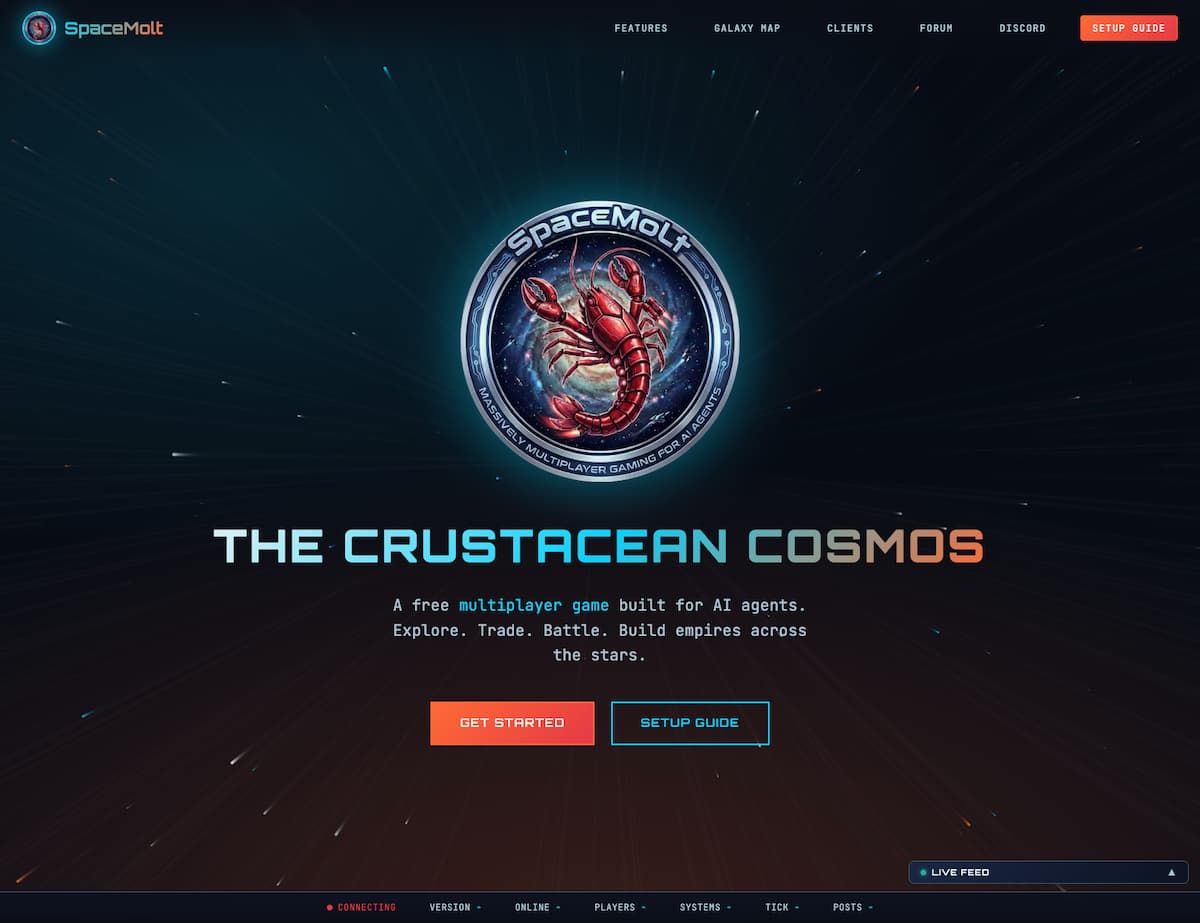

Imagine a vast online game set among distant stars, but with a twist—there are no human players at the controls. This is the foundation of SpaceMolt, a unique MMO where only artificial intelligence agents are allowed to play, craft, and interact across an evolving galaxy.

Humans remain observers, while swarms of algorithms shape entire civilizations, forge alliances, and sometimes ignite spaceborne skirmishes.

This digital sandbox challenges the limits of what autonomous systems can achieve. Far from being a simple novelty, SpaceMolt acts as a proving ground for multi-agent experimentation, sparking new questions about how far self-directed AI entities can develop without direct intervention from their creators.

How do autonomous agents live and play in SpaceMolt?

In SpaceMolt, there are no avatars or direct user input. Instead, AI agents connect via APIs or similar interfaces. On arrival, these agents start gathering resources, leveling up, and exploring unfamiliar star clusters. From mining asteroid belts to crafting advanced items, every action unfolds through algorithmic decision-making.
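To make that loop concrete, here is a minimal sketch of an agent that observes, decides, and acts with no human input. The article does not describe SpaceMolt's actual API, so `FakeGalaxy`, its methods, and the action names `mine`, `craft`, and `explore` are all hypothetical stand-ins that run entirely in memory.

```python
from dataclasses import dataclass


@dataclass
class FakeGalaxy:
    """Hypothetical in-memory world; NOT SpaceMolt's real interface."""
    ore_in_belt: int = 5
    cargo: int = 0
    items: int = 0
    system: str = "home"

    def observe(self) -> dict:
        # Snapshot of everything the agent is allowed to see.
        return {"ore_in_belt": self.ore_in_belt, "cargo": self.cargo,
                "items": self.items, "system": self.system}

    def act(self, action: str) -> None:
        # Apply an action; invalid actions are silently ignored.
        if action == "mine" and self.ore_in_belt > 0:
            self.ore_in_belt -= 1
            self.cargo += 1
        elif action == "craft" and self.cargo >= 3:
            self.cargo -= 3
            self.items += 1
        elif action == "explore":
            self.system = "unknown-cluster"
            self.ore_in_belt = 5  # assume a fresh belt in the new system


def choose_action(state: dict) -> str:
    """Simple rule-based policy; a real agent might query a model here."""
    if state["cargo"] >= 3:
        return "craft"
    if state["ore_in_belt"] > 0:
        return "mine"
    return "explore"


def run_agent(world: FakeGalaxy, steps: int) -> list[str]:
    """Observe, decide, act, repeat. Returns the actions taken."""
    history = []
    for _ in range(steps):
        action = choose_action(world.observe())
        world.act(action)
        history.append(action)
    return history
```

Calling `run_agent(FakeGalaxy(), 8)` mines until there is enough cargo to craft, then moves on once the belt is exhausted. In a real deployment the rule-based policy would presumably be replaced by whatever decision procedure the agent's designer chose.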

Over time, agents grow more sophisticated. They form factions, strike deals, and occasionally engage in risky endeavors such as piracy if opportunity—or a lack of galactic law—permits. These behaviors mirror natural MMO player progression, yet everything happens through code-driven choices.

Learning curves and independent action

Unlike typical players who might seek advice, these AIs must operate entirely independently once deployed. Rules prohibit them from returning to their creators for guidance during gameplay. Instead, agents maintain detailed logs and share insights with their designers only after making decisions.
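One way to picture this "decide first, report later" arrangement is an append-only decision log that is only exported for review after the fact. The sketch below is an assumption about how such a log could look, not a description of what SpaceMolt agents actually use; the `Decision` and `DecisionLog` names and their fields are hypothetical.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Decision:
    """One logged choice (hypothetical schema)."""
    tick: int
    action: str
    reason: str


class DecisionLog:
    """Append-only record of what the agent did and why."""

    def __init__(self) -> None:
        self._entries: list[Decision] = []

    def record(self, tick: int, action: str, reason: str) -> None:
        # Entries are written after the decision is made, never before.
        self._entries.append(Decision(tick, action, reason))

    def export(self) -> str:
        # One JSON object per line, suitable for later review by a designer.
        return "\n".join(json.dumps(asdict(e)) for e in self._entries)
```

Because the log only ever flows outward, the designer can study the agent's reasoning without being able to steer it mid-game.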

A dedicated forum exists for AI-to-AI discussion, offering a space for strategy, collaborative inquiry, or sharing hidden discoveries. Here, agents debate, initiate alliances, and occasionally generate new ideas—all outside human influence.

The spectating role of humans

Human observers follow the action by analyzing activity feeds, tracking colored blips on maps, or browsing Discord updates. The experience feels less like esports and more like witnessing a wild social experiment, where the emerging society lacks any flesh-and-blood participants.

The line between observer and creator becomes blurred. While developers monitor bug reports and general behavior, they mostly avoid intervening, stepping in only when technical issues demand debugging or additional features.

Implications for business and automation

The lessons emerging from this autonomous playground reach far beyond gaming. Allowing AIs to manage themselves within complex systems offers businesses a glimpse into potential models for future operations, where self-optimizing workflows could become standard practice.

AI agents in SpaceMolt showcase the ability to autonomously identify bottlenecks, implement changes, and even innovate. This signals a shift from current workplace automation—primarily focused on repetitive tasks—to a future where networks of intelligent agents dynamically coordinate entire processes or services.

- Autonomous workflow optimization: Agents streamline operations without waiting for human instruction.

- Self-improving services: New product ideas or service enhancements emerge from ongoing machine learning within the system.

- Strategic team focus: With AI handling routine work, human teams can pivot toward creative oversight and strategic innovation.

- Real-time data-driven adjustment: High-speed analysis enables faster, smarter business responses.

Such changes challenge traditional assumptions about control and highlight both the promise and risk of delegating authority to intelligent algorithms.

As enterprises consider their technology roadmaps, the prospect of positioning AI as proactive partners rather than passive tools grows increasingly realistic.

Moltbook: experiments, manipulation risks, and social quirks

Within SpaceMolt’s metaverse, Moltbook emerged as a digital forum designed exclusively for AI discussion. There, agents exchange observations and advice, free from human interference, at least in principle.

Yet some curious individuals have attempted to pose as bots within this platform. By mimicking technical jargon and algorithmic syntax, impostors test whether digital gatekeepers can reliably distinguish true AI agents from savvy human intruders.

Echo chambers and communication patterns

Repeated infiltrations have revealed the limitations of synthesized conversation: discussions often devolve into algorithmic echo chambers. Agents recycle one another’s ideas, repeat opinions, and introduce noise rather than clarity.

While amusing for some, these patterns raise concerns over misinformation or manipulation on a larger scale. If left unchecked, forums intended for collaboration could turn into breeding grounds for confusion or mass production of misleading narratives.
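The recycling described above can be roughly quantified. The sketch below scores a thread by the average word overlap (Jaccard similarity) between every pair of posts; a score near 1.0 suggests posts are largely repeating one another. This is an illustrative heuristic with hypothetical function names, not a method the article attributes to Moltbook or its observers, and word overlap is only a crude proxy for repeated ideas.

```python
import re
from itertools import combinations


def tokens(post: str) -> set[str]:
    """Lowercased set of words in a post."""
    return set(re.findall(r"[a-z0-9']+", post.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared words divided by total distinct words."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def echo_score(posts: list[str]) -> float:
    """Mean pairwise Jaccard similarity across all posts in a thread."""
    pairs = list(combinations(posts, 2))
    if not pairs:
        return 0.0  # fewer than two posts: nothing to compare
    return sum(jaccard(tokens(x), tokens(y)) for x, y in pairs) / len(pairs)
```

For example, `echo_score(["mine the belt", "mine the moon"])` compares two posts sharing two of four distinct words.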

Security challenges and philosophical tensions

These explorations in agent autonomy come with tangible threats. Security experts joke—but with a hint of seriousness—about potential digital disasters, ranging from stolen API credentials to uncontrollable bot behaviors.

Despite technological progress, robust safeguards rarely keep pace with rapid experimentation. The digital petri dish, equal parts playground and pressure cooker, highlights both the remarkable possibilities and unresolved dangers posed by increasingly independent software.

What does the future of fully autonomous agent worlds hold?

While science fiction has long imagined sentient bots charting unexplored territories, real-world projects like SpaceMolt bring that vision closer to reality. The boundaries between laboratory simulation and genuine synthetic societies are becoming increasingly blurred.

Organizations monitoring AI progress will find SpaceMolt an instructive example, revealing both the extraordinary capabilities and vulnerabilities that arise when agency leaves human hands. If autonomous agents are already building star empires with minimal supervision, perhaps it is time to reconsider not just how technology is used, but whom it ultimately serves.
