The prospect of translating thoughts directly from brain activity has fascinated researchers and storytellers alike for decades. Today, advances in artificial intelligence and neuroscience are pushing this vision closer to reality, especially for individuals unable to speak due to paralysis or illness. Recent breakthroughs suggest that decoding the mind’s silent language—often referred to as “inner speech”—is no longer just science fiction. These developments spark new discussions about the nature of communication, the boundaries of privacy, and the future potential of such technology.

From attempted speech to internal dialogue

Earlier neural interfaces primarily targeted the reconstruction of attempted speech. Users would try to physically say words, even when paralysis prevented any sound or movement, while electrodes picked up the resulting brain signals for computer analysis. This method made steady progress but had a clear limit: it could only recognize what someone actively tried to say. The focus is now shifting as researchers explore the decoding of unspoken internal dialogue, the private conversations that occur within one’s own mind.

In this newer approach, participants perform cognitive tasks silently, such as counting objects on a screen, while sensors record their brain’s electrical patterns. AI models then interpret these subtle signals to generate text reflecting the individual’s inner speech. Accuracy is still far from perfect, but rapid improvements show that mental content previously hidden from the outside world is becoming readable.

- Shifted from decoding spoken attempts to interpreting inner thought patterns

- Deployed advanced electrode arrays and AI tailored to each person’s unique brain signals

- Enabled paralyzed individuals to “speak” via digital interfaces using intention alone
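
To make the idea of a decoder tailored to each person’s signals more concrete, here is a deliberately simplified sketch. It is not the method used in any particular study. It assumes each silent word yields a short vector of numeric features, simulates those vectors with random noise, and uses a basic nearest-centroid classifier in place of the far more sophisticated AI models described above. All names (`calibrate`, `decode`, `simulate_trial`) and the four-value feature vectors are hypothetical.

```python
import math
import random


def centroid(rows):
    """Average a list of equal-length feature vectors, column by column."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def calibrate(trials):
    """Build one template per word from a single participant's calibration trials.

    trials maps each word to a list of feature vectors recorded while the
    participant silently thought that word (simulated here, not real data).
    """
    return {word: centroid(vectors) for word, vectors in trials.items()}


def decode(templates, features):
    """Return the word whose template is closest (Euclidean) to the new features."""
    return min(templates, key=lambda word: math.dist(templates[word], features))


def simulate_trial(rng, pattern, noise=0.3):
    """Hypothetical stand-in for a recording: a fixed pattern plus Gaussian noise."""
    return [value + rng.gauss(0.0, noise) for value in pattern]


# Assumed "true" patterns for three silently counted words (purely illustrative).
patterns = {
    "one": [1.0, 0.0, 0.0, 0.0],
    "two": [0.0, 1.0, 0.0, 0.0],
    "three": [0.0, 0.0, 1.0, 0.0],
}

rng = random.Random(0)

# Calibration phase: 20 simulated trials per word for this one participant.
calibration = {
    word: [simulate_trial(rng, pattern) for _ in range(20)]
    for word, pattern in patterns.items()
}
templates = calibrate(calibration)

# Decoding phase: classify fresh simulated trials and emit text.
decoded = [decode(templates, simulate_trial(rng, patterns[w])) for w in ("one", "two", "three")]
print(" ".join(decoded))
```

Because the templates are built from one participant’s own calibration trials, the sketch mirrors the per-person tailoring mentioned in the list above. Real systems work with far richer signals and models, and with noisier data a simple scheme like this one will sometimes pick the wrong word.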

What makes human speech more than just words?

Everyday language depends on much more than precise vocabulary. Elements like tone, inflection, pacing, and context add layers of meaning that plain text cannot fully capture. This complexity creates significant challenges for any system aiming to faithfully reproduce real-life speech dynamics on a screen. Neuroscientists working on inner speech decoding emphasize that effective communication is shaped not only by what is said, but also by how it is expressed.

One innovative experiment asked a participant to sing or pose questions—actions requiring deliberate changes in voice pitch or sentence endings. Brain-machine interfaces are starting to detect traces of these expressive nuances in the neural patterns produced during each activity. As algorithms become more sophisticated and datasets grow richer, capturing subtleties like melody, intonation, or emotional tone becomes increasingly feasible.

Capturing context and expression

Effective communication often relies on non-verbal cues closely linked to language itself. Detecting shifts in pitch or emphasis within a person’s silent speech could make interactions through brain-computer interfaces feel far more natural. Scientists hope that next-generation devices will be able to identify whether an individual is expressing doubt, surprise, or excitement by analyzing the related patterns of brain activity.

This capability holds transformative promise, particularly in social settings where conveying tone and intent is crucial—even when spoken words are impossible. By bridging this gap, future neural decoders aim to restore not only basic communication, but also the authentic self-expression lost through injury or disease.

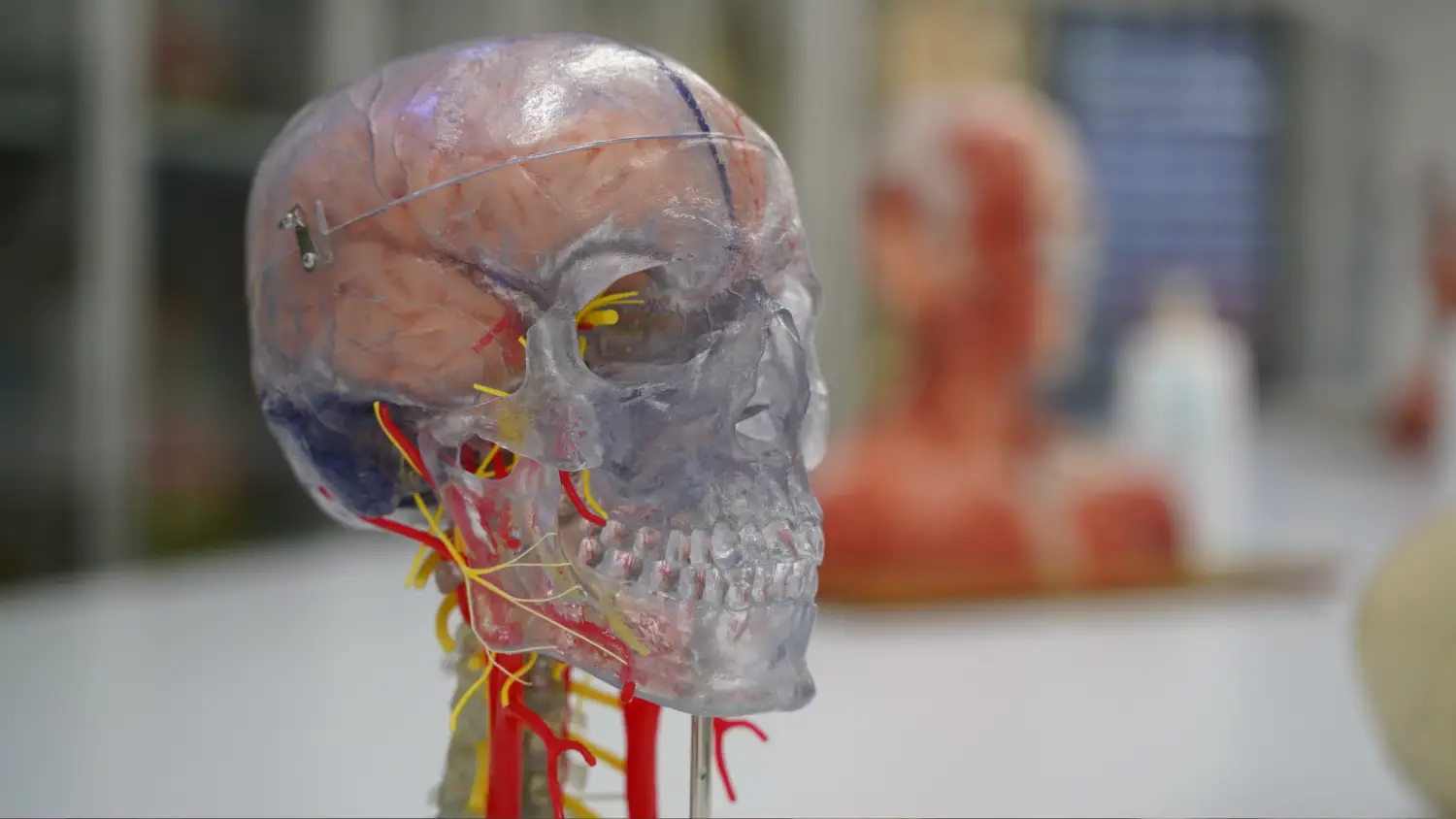

The complex brain landscape

The human brain contains billions of neurons forming trillions of dynamic connections—a vast network generating intricate, ever-changing signals. Successfully extracting intelligible speech from these signals requires high-resolution hardware and agile machine learning techniques.

With denser electrode grids and scalable AI, researchers expect future systems to access more information, converting fleeting brain activity into fluid, comprehensible output in real time. The key lies in sampling broader regions and distinguishing faint variations in neural signals tied to specific words, phrases, or emotions.

Emerging applications and societal impact

The initial goal remains medical in nature—unlocking voices and restoring autonomy for people trapped within unresponsive bodies. Brain-computer technologies stand ready to transform care both in hospitals and at home, potentially leading to new standards of patient independence and dignity. As commercial and practical deployment draws nearer, however, broader cultural and ethical questions are coming into sharper focus.

Recent progress points toward a future where direct neural translation of thoughts may become more widely available. Commercialization may not stay limited to hospitals, and some form of consumer adoption could follow in the coming years. This prospect prompts ongoing public debate about privacy, identity, and consent as the boundary between thought and articulation grows less distinct.

Challenges ahead and the road to real-time decoding

Accurately capturing spontaneous inner speech remains beyond the reach of today’s systems. Many errors arise from the sheer density and variability present in human brain networks. Ongoing research focuses on refining sensors and interpretive software, steadily moving closer to seamless thought-to-text conversion.

As device precision improves, so do hopes for enabling nuanced, lifelike conversations through brain signals alone. Researchers are exploring new materials, smarter algorithms, and larger clinical trials to ensure reliable, reproducible outcomes before widespread adoption. The journey to true real-time mind-to-text transformation still presents technical mysteries, yet the trajectory suggests that a once-impossible dream is beginning to take shape in laboratories around the globe.
