With the swift advancement of artificial intelligence, telling apart a genuine video from one crafted by sophisticated algorithms has become a real challenge. Sometimes it is obvious, as in this animal Rebellion AI clip; at other times it is much harder to tell.

Online platforms are now saturated with hyperrealistic scenes produced by tools that grow more convincing each month.

Whether these clips appear in social media feeds or on short-video platforms, learning to recognize them is now crucial, not just out of curiosity but as a defense against misinformation and digital manipulation.

Clues before watching the video

Even before pressing play, several signals outside the footage itself can suggest a potential AI origin. These details serve as an initial checkpoint for anyone who wants to verify a video’s authenticity.

Watermarks, labeling systems, and metadata sometimes provide compelling evidence about a video’s origins. However, as detection methods evolve, so do the techniques used to bypass them, making constant vigilance increasingly necessary.

Searching for watermarks and subtle signatures

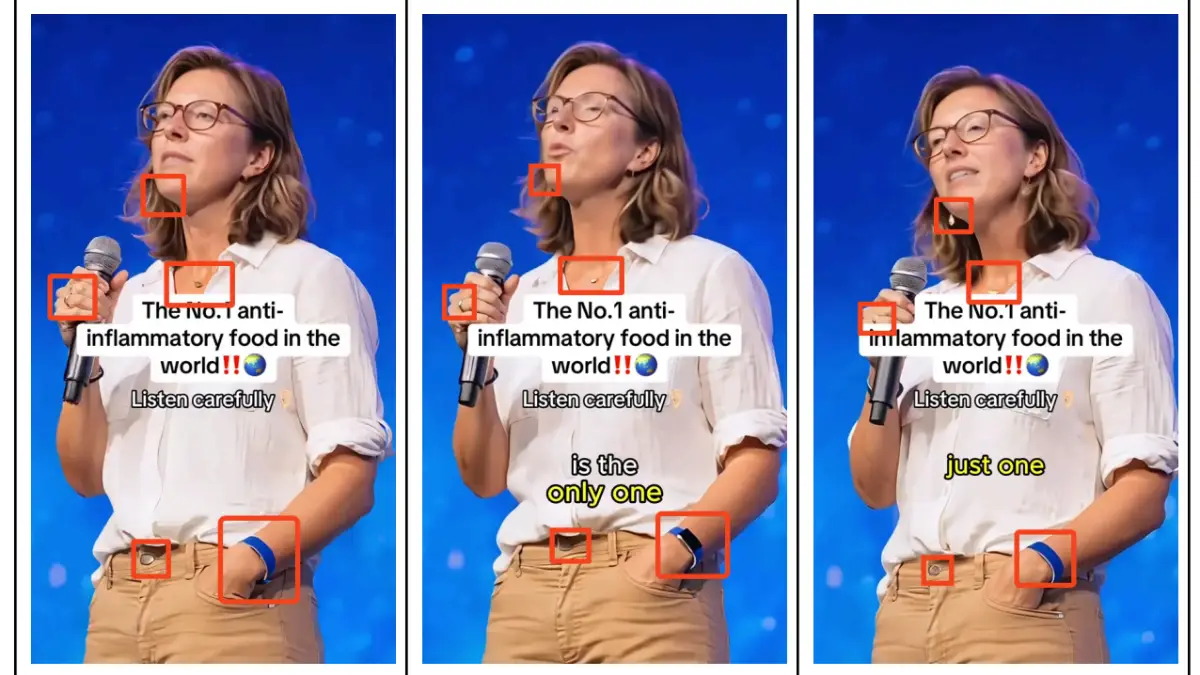

Most mainstream AI video generators embed their own identifiers—often called watermarks—into each video they produce. Typically found in corners and sometimes visible only momentarily, these marks can be hidden through cropping, blurring, or editing software designed to erase any direct signs of machine creation.

A thorough inspection involves pausing videos at various moments and examining the borders of each frame. Occasionally, a hidden watermark appears, particularly during scene transitions or rapid image shifts. It is also worth noting that some watermarks move across the frame over time, which makes them harder to crop out, so checking a single corner is not enough.
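
As a rough illustration of that inspection routine, the sketch below picks evenly spaced moments to pause at and computes the four corner regions worth zooming into. The function names and the default 20% corner size are assumptions made for this example, not part of any tool; extracting and viewing the frames is left to whatever player or editor is at hand.

```python
from typing import Dict, List, Tuple

# (left, top, right, bottom) in pixels
Box = Tuple[int, int, int, int]


def sample_timestamps(duration_s: float, count: int) -> List[float]:
    """Return `count` evenly spaced timestamps, skipping the exact start and end."""
    if duration_s <= 0 or count <= 0:
        return []
    step = duration_s / (count + 1)
    return [round(step * (i + 1), 3) for i in range(count)]


def corner_regions(width: int, height: int, fraction: float = 0.2) -> Dict[str, Box]:
    """Return the four corner boxes, each spanning `fraction` of the width and height.

    The 0.2 default is an arbitrary starting point (an assumption), not a known
    watermark size.
    """
    w = int(width * fraction)
    h = int(height * fraction)
    return {
        "top_left": (0, 0, w, h),
        "top_right": (width - w, 0, width, h),
        "bottom_left": (0, height - h, w, height),
        "bottom_right": (width - w, height - h, width, height),
    }
```

Because some marks drift around the frame, it helps to look at all four regions at every sampled timestamp rather than tracking one corner.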

Reading descriptions, hashtags, and account biographies

Certain uploaders practice transparency, explicitly tagging their content as AI-made with clear mentions such as #aigenerated or similar labels. Examining the text accompanying a video—including the creator’s biography—may reveal admissions of algorithmic assistance. Still, this approach mainly uncovers honest actors, not those intent on misleading audiences.

It remains common for users to reference both the technique and specific tools employed within their descriptions and tags. This habit increases traceability among communities enthusiastic about generative art but offers little help where deception or digital trickery is involved.
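
The text check can be partly automated. Here is a minimal sketch that scans a description or biography for disclosure hashtags. Only `#aigenerated` comes from the examples above; the other tags in `AI_TAGS` are illustrative guesses and would need to be adapted to whatever tags a given community actually uses.

```python
import re

# Only "aigenerated" is taken from the article; the rest are illustrative assumptions.
AI_TAGS = {"aigenerated", "aiart", "aivideo", "madewithai"}


def find_ai_disclosures(text: str) -> list:
    """Return the sorted set of known AI-disclosure hashtags found in `text`."""
    tags = re.findall(r"#(\w+)", text.lower())
    return sorted({tag for tag in tags if tag in AI_TAGS})
```

An empty result says nothing about authenticity: as noted above, this only catches uploaders who choose to disclose.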

Recognizing official moderation labels

Many major online platforms have started flagging synthetically produced videos with overlay notices like “AI Generated” or “Contains AI content.” These warnings rely either on voluntary disclosure by the uploader or automatic systems that inspect video file metadata for clues pointing to synthetic origins.

Unfortunately, these safeguards are far from perfect. When creators modify videos using third-party programs before uploading, they often remove telltale metadata, circumventing automatic flags. Additionally, differences between platform versions may result in labels being visible only on certain devices. Unless every protective layer works flawlessly, vulnerabilities persist.
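
To make the metadata idea concrete, the sketch below searches an already-extracted metadata dictionary for strings that hint at synthetic origin. It assumes the metadata has been read into a plain `dict` by some other tool, and the marker list is illustrative: `trainedalgorithmicmedia` mirrors the IPTC digital source type term for AI-generated media, while the other two are simple free-text guesses. It is not how any particular platform implements its checks.

```python
# Illustrative markers (assumptions), matched case-insensitively.
AI_MARKERS = ("trainedalgorithmicmedia", "ai-generated", "ai generated")


def metadata_hints(metadata: dict) -> list:
    """Return the metadata keys whose name or value contains an AI marker."""
    hits = []
    for key, value in metadata.items():
        haystack = f"{key} {value}".lower()
        if any(marker in haystack for marker in AI_MARKERS):
            hits.append(key)
    return hits
```

A file that has been re-exported through third-party software may carry none of these fields, in which case the function returns an empty list even for synthetic footage.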

Indicators found within the video itself

If no explicit admission is present, visual analysis becomes key. Deep learning has brought realism to new heights, yet persistent issues remain—especially when depicting complex human forms or readable text.

Careful viewers can frequently detect inconsistencies in anatomy, movement, texture, and even basic written language on everyday objects in videos, providing tangible proof of artificial intervention.

Spotting anomalies in hands, limbs, and motion

Despite significant progress, current AI models still struggle with the intricate shapes and movements of human hands. Typical flaws include unnatural numbers of fingers, fused digits, disappearing or morphing fingernails, or anatomical oddities such as reversed thumbs. Observing hand gestures in slow motion or pausing during critical movements can expose these errors.

Similarly, arms and legs often pose challenges for algorithms, resulting in twisted joints, merging limbs, or body parts vanishing behind objects without logical reappearance. These phenomena contrast sharply with natural human anatomy, serving as reliable markers of synthetic origin.

Noticing issues around facial features and speech

Eyes are among the most difficult elements for AI to replicate convincingly. Inconsistent iris shapes, shifting pupil colors depending on angle, or eyes lacking realistic reflections undermine overall credibility. Blinks may be missing or occur too rhythmically. Teeth also present challenges—sometimes AI depicts mouths as a continuous white block rather than individual teeth.
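
One way to put a number on "too rhythmic" is the coefficient of variation of the gaps between blinks. The sketch below assumes the blink timestamps were noted by hand while watching (or by some separate detector); the function name is hypothetical, and no calibrated threshold is implied.

```python
from statistics import mean, pstdev


def blink_interval_cv(blink_times_s):
    """Coefficient of variation of the gaps between consecutive blinks.

    Returns None when fewer than three blinks are available, since one gap
    alone says nothing about regularity.
    """
    if len(blink_times_s) < 3:
        return None
    gaps = [later - earlier for earlier, later in zip(blink_times_s, blink_times_s[1:])]
    average_gap = mean(gaps)
    if average_gap <= 0:
        return None
    return pstdev(gaps) / average_gap
```

A value near zero means the blinks are almost perfectly evenly spaced, which is worth a second look; natural blinking tends to be irregular. A `None` result on a long clip points to the other symptom, missing blinks.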

Checking lip-sync accuracy offers another clue: if speech and mouth movements do not align, the likelihood of computer generation increases. While rare in top-tier productions, such slips still appear often enough to alert attentive viewers.
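
The alignment check can be sketched as a small cross-correlation. The example assumes two per-frame series are already available, one for how open the mouth is and one for audio loudness; how those are measured is outside the scope of this sketch, and `best_lag` is a hypothetical helper rather than an established tool.

```python
def best_lag(mouth_open, audio_level, max_lag=5):
    """Return the frame offset at which audio best matches mouth movement.

    A positive result means the audio trails the mouth by that many frames;
    zero means the two series line up.
    """
    if not mouth_open or not audio_level:
        return None
    mouth_avg = sum(mouth_open) / len(mouth_open)
    audio_avg = sum(audio_level) / len(audio_level)

    def score(lag):
        # Mean product of the centered series over the overlapping frames.
        products = [
            (mouth_open[i] - mouth_avg) * (audio_level[i + lag] - audio_avg)
            for i in range(len(mouth_open))
            if 0 <= i + lag < len(audio_level)
        ]
        return sum(products) / len(products) if products else float("-inf")

    return max(range(-max_lag, max_lag + 1), key=score)
```

An offset of a frame or two can come from ordinary encoding issues, so a nonzero lag is a prompt for closer viewing rather than proof of generation.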

Examining texts, logos, and signage inside the frame

Synthetic generation reliably struggles with embedded text—whether on shopfronts, billboards, clothing tags, or magazine covers. What initially looks like standard writing may, upon closer inspection, dissolve into nearly legible characters filled with random symbols or incorrect spacing.

Brand logos provide another effective test. Letters can deviate from original designs, break sequence, or display irregularities unlikely to pass corporate quality control. Whenever malformed or nonsensical scripts appear in otherwise familiar settings, suspicion of algorithmic output should rise.
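
If the words on a sign or label are transcribed (by hand or with an OCR tool of choice), a quick ratio of unrecognized tokens gives a feel for how garbled the text is. The sketch below is a simple illustration; the vocabulary is supplied by the caller and the function name is an assumption for this example.

```python
def unknown_word_ratio(words, vocabulary):
    """Fraction of transcribed tokens that are not in `vocabulary`.

    Tokens containing digits or stray symbols count as unknown, since
    near-legible text with random characters is exactly the symptom of interest.
    """
    cleaned = [word.strip(".,!?:;\"'").lower() for word in words]
    cleaned = [word for word in cleaned if word]
    if not cleaned:
        return None
    unknown = [word for word in cleaned if word not in vocabulary]
    return len(unknown) / len(cleaned)
```

For a shop sign transcribed as `["FRESH", "COFEFE", "OP3N", "DAILY"]` checked against `{"fresh", "coffee", "open", "daily"}`, half of the tokens fail to match. Real footage can also contain rare words, foreign-language signs, or brand names, so the vocabulary matters as much as the ratio.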

Rapid checklist for identifying deepfake or AI-produced videos

For practical reference, here is a quick guide to evaluating suspicious video content:

- Pause regularly to check for hidden or masked watermarks around the frame edges.

- Review video descriptions and user profiles for transparent disclosures or AI-related tags.

- Look for official notifications or badges applied by the hosting platform.

- Inspect hands, fingers, and body movements for unnatural transitions or anatomical mistakes.

- Observe eye appearance, teeth, and synchronized lip movements in talking-head segments.

- Examine any onscreen textual element for gibberish, misspellings, or altered brand logos.

| Category | Human video | AI-generated video |
|---|---|---|
| Hands & fingers | Natural, proportional, logical moves | Unusual numbers, fused or missing segments |
| Facial expressions | Subtle reflexes, imperfect symmetry | Mechanical blinking, odd eyes or teeth |
| Text in frame | Legible, consistent with surroundings | Jumbled, misspelled, unfamiliar fonts |
| Audio sync | Lip movement matches audio | Speech out of sync with lips |
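
For anyone who wants to keep notes while working through the checklist, it can be sketched as a tiny tally. The check names in `CHECKS` are shorthand invented for this example, one per bullet above, and the output is a summary of observations, not a verdict.

```python
# One entry per checklist bullet; the names are shorthand chosen for this sketch.
CHECKS = (
    "watermark",
    "disclosure",
    "platform_label",
    "anatomy",
    "face_and_speech",
    "text_and_logos",
)


def summarize(findings: dict) -> dict:
    """Summarize which checks raised a flag and which were never performed.

    `findings` maps a check name to True (suspicious) or False (looked fine).
    """
    flagged = [check for check in CHECKS if findings.get(check) is True]
    unchecked = [check for check in CHECKS if check not in findings]
    return {"flagged": flagged, "unchecked": unchecked, "flag_count": len(flagged)}
```

Tracking the unchecked items separately keeps "nothing found" from being confused with "not looked at".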

Contextual analysis and pattern recognition

Beyond technical evidence, broader context provides valuable insight. Accounts that consistently post cinematic, color-saturated clips featuring only staged scenes deserve extra scrutiny. Genuine pages tend to capture ordinary daily events, while algorithm-driven channels often maintain a predictable aesthetic throughout their entire feed.

Investigating publication history can also help. If stylized errors or recurring patterns stretch back for months, it suggests a long-standing commitment to posting AI-crafted material. Recognizing these trends, alongside technical clues, empowers anyone keen to navigate today’s complex landscape of digital content.
