OpenAI cut thinking times in its GPT-5.x models on January 10, 2026, choosing speed over depth after usage data showed that people favor faster responses, not smarter ones. Three months later, GPT-4o retires completely, forcing millions of users onto models optimized for the behavior they exhibit, not the capability they pay for.

And nobody published the accuracy data.

OpenAI just forced millions onto faster AI—and nobody knows what they’re losing

The model retirements aren’t routine deprecation. GPT-4o dies completely after its final retirement window, along with GPT-4.1, GPT-4.1 mini, and o4-mini. The only path forward is GPT-5.x models with reduced Standard and Light thinking times—a change OpenAI made because “users prefer faster responses.”

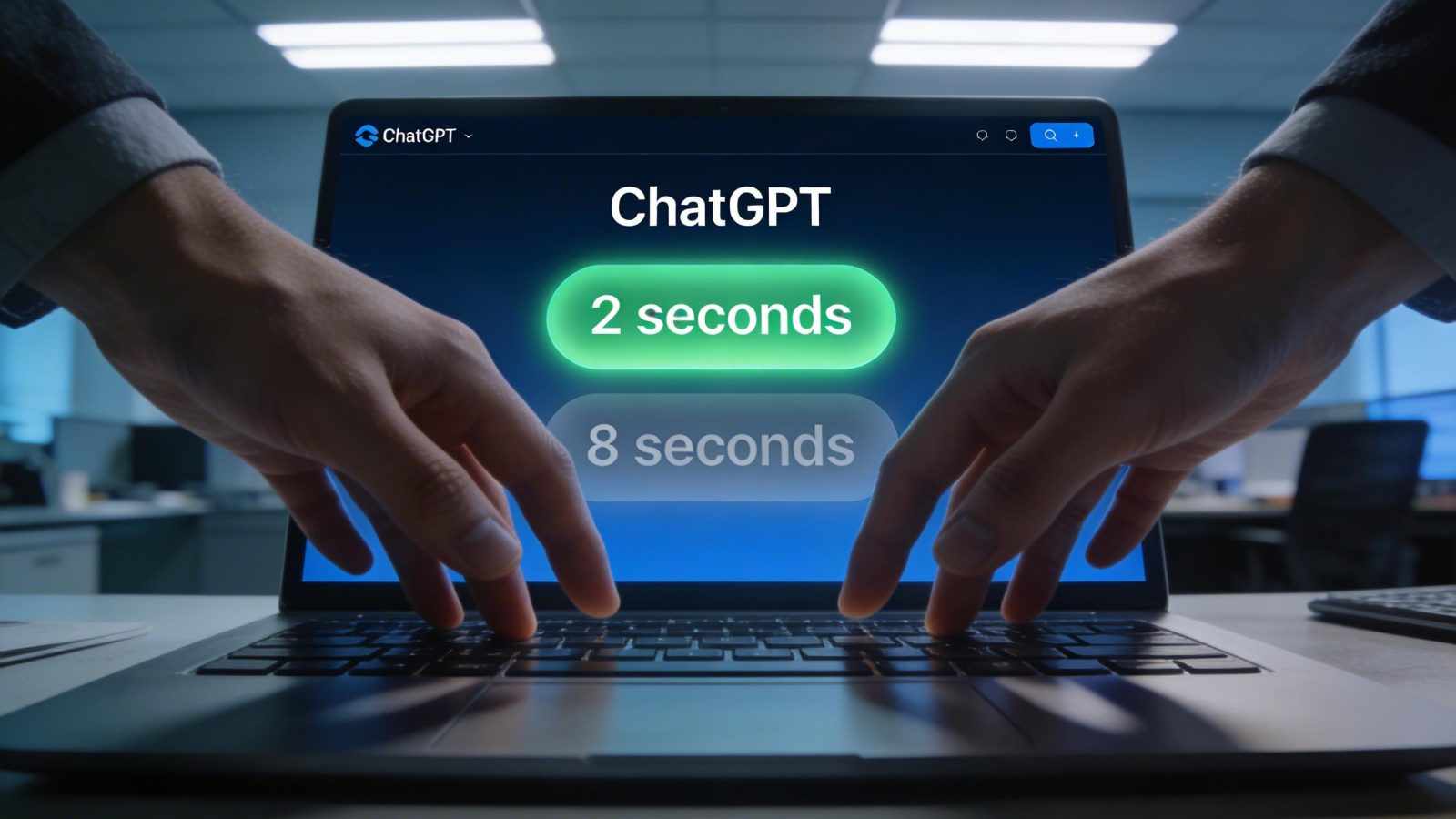

That’s the whole pitch. Users wanted 2-second answers instead of 8-second thoughtful ones, so OpenAI optimized the entire product line for latency. But they never published benchmarks comparing old versus new thinking times on complex reasoning tasks—math, coding, multi-step logic. You’re getting faster AI. Whether it’s shallower AI is something OpenAI apparently decided you don’t need to know.

This is part of a broader shift that’s quietly changing how we use the internet: prioritizing instant gratification over thoughtful interaction. OpenAI saw the click data and made a rational business decision. The problem is that rationality and intelligence aren’t the same thing.

The February 4 reversal reveals what OpenAI won’t say about reasoning quality

Less than four weeks after cutting thinking times, OpenAI restored GPT-5.2 Thinking to prior levels on February 4, 2026, calling the reduction “inadvertent.” That’s not a routine update. That’s damage control.

The speed cuts likely broke something critical. Customers paying $200/month for the Pro tier expect thoughtful reasoning, not ChatGPT sprinting through complex prompts to hit a latency target. OpenAI walked back Extended mode but left Standard and Light optimized for speed. The message: if you want depth, pay more. If you’re on the free or Plus tier, you get the version optimized for what users click, not what they need.

Meanwhile, competitors aren’t following OpenAI’s speed-first strategy. The same quality-versus-speed debate that has developers turning against Claude Code is now hitting ChatGPT’s core reasoning models. And the honest trade-off is this: speed optimization makes sense for casual users asking “summarize this email,” but OpenAI is applying the same latency targets to complex reasoning tasks that justified premium pricing in the first place.

No public data exists on how the January 10 cuts affected accuracy. Just a February 4 reversal for one tier, and silence on the rest.
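In the absence of published numbers, the comparison is something you could run yourself. Below is a minimal sketch, not a real benchmark: `evaluate`, `fast_mode`, `slow_mode`, and the three toy tasks are all hypothetical stand-ins, and the canned answers are invented for illustration rather than measured from any OpenAI model. To test a real model, you would replace the two stub functions with calls to the settings you want to compare and use a task set that reflects your own work.

```python
import statistics
import time
from typing import Callable, Dict, Iterable, Tuple


def evaluate(ask: Callable[[str], str], tasks: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Run every (prompt, expected) pair through `ask`; report accuracy and median latency."""
    latencies = []
    correct = 0
    for prompt, expected in tasks:
        start = time.perf_counter()
        answer = ask(prompt)
        latencies.append(time.perf_counter() - start)
        # Exact-match scoring; real reasoning tasks usually need a more careful grader.
        correct += int(answer.strip() == expected)
    if not latencies:
        raise ValueError("tasks must not be empty")
    return {
        "accuracy": correct / len(latencies),
        "median_latency_s": statistics.median(latencies),
    }


# Toy task set (hypothetical). Swap in prompts that matter to you.
TASKS = [("17 * 23", "391"), ("2 ** 10", "1024"), ("sum of 1..100", "5050")]


def fast_mode(prompt: str) -> str:
    # Stand-in for a low-latency setting. The wrong third answer is made up
    # purely to show what a gap would look like in the output.
    canned = {"17 * 23": "391", "2 ** 10": "1024", "sum of 1..100": "5000"}
    return canned[prompt]


def slow_mode(prompt: str) -> str:
    # Stand-in for a longer-thinking setting: simulated delay, all answers correct.
    time.sleep(0.01)
    canned = {"17 * 23": "391", "2 ** 10": "1024", "sum of 1..100": "5050"}
    return canned[prompt]


fast = evaluate(fast_mode, TASKS)
slow = evaluate(slow_mode, TASKS)
print("fast:", fast)
print("slow:", slow)
```

The point of the sketch is the shape of the comparison: the same prompts, two settings, accuracy and latency reported side by side. Only with both columns can you tell whether a faster answer cost you anything.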

The capability cuts compound—and users are caught in the crossfire

OpenAI isn’t just optimizing for speed. They’re simultaneously restricting capabilities through age-aware safeguards introduced alongside model retirements. The four thinking modes—Light, Standard, Extended, Heavy—give Plus and Pro users control over depth, but those controls don’t help when the underlying models are optimized for metrics that conflict with thoughtful output.

ChatGPT is simultaneously getting faster, more restricted, and potentially less accurate. As models optimize for speed over depth, the skills that matter in 2026 aren’t about prompt engineering—they’re about knowing when AI is giving you a fast answer versus a correct one.

OpenAI optimized for the behavior users exhibit rather than the capability users pay for. The question isn’t whether AI will get faster—it’s whether anyone will notice when it stops getting smarter.
